A question that regularly comes up when I talk about containers is, “can you specify where the containers/images live on the host?”

This is a good question. The docker install is great because it’s so simple, but the flip side is that you don’t get many options to configure.

It makes sense that you’d want to move the containers/images off the C:\ drive, for example to keep that drive for the OS only. Or say you have a super fast SSD on your host that you want to utilise? OK, spinning up containers is quick, but that doesn’t mean we can’t make it faster!

So, can you move the location of containers and images on the host?

Well, yes!

There’s a switch you can use when starting the docker service that lets you specify where containers and images are stored. That switch is -g.
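As an aside, newer Docker releases list -g as deprecated in favour of --data-root, and the same setting can live in the daemon’s config file instead of on the command line. I haven’t gone that route in this post, so treat the following as a rough sketch rather than a tested recipe. It assumes the default Windows config path C:\ProgramData\docker\config\daemon.json, that no daemon.json exists yet (it would be overwritten), and a Docker version that understands the data-root key.

```powershell
# Sketch only: set the data root via daemon.json instead of a service switch.
# E:\Containers is the custom location used in the rest of this post.
$configDir = 'C:\ProgramData\docker\config'
New-Item -Path $configDir -ItemType Directory -Force | Out-Null

# ConvertTo-Json takes care of escaping the backslashes in the path.
# WARNING: this overwrites any existing daemon.json.
@{ 'data-root' = 'E:\Containers' } |
    ConvertTo-Json |
    Set-Content -Path (Join-Path $configDir 'daemon.json') -Encoding Ascii

# The daemon only reads the config file at startup.
Restart-Service Docker
```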

Now, I’ve gone the route of not altering the existing service but creating a new one with the -g switch. Mainly because I’m testing and like rollback options but also because I found it easier to do it this way.

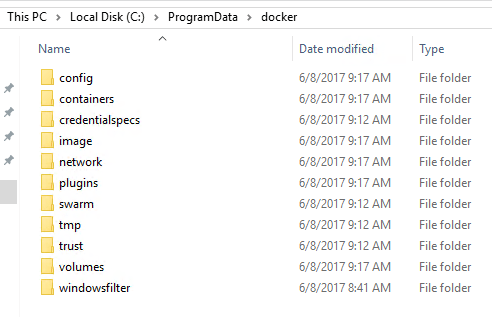

So the default location for containers and images is: – C:\ProgramData\docker
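If you’d rather ask the daemon than trust the default, docker info reports the directory it’s actually using. A quick check, assuming the docker client is on your PATH and the service is running:

```powershell
# Print only the root directory the running daemon uses for images/containers
docker info --format '{{.DockerRootDir}}'
```

On a default install this should match the path above.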

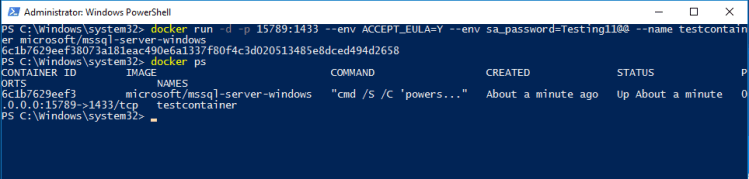

OK, let’s run through the commands to create a new service pointing the container/images backend to a custom location.

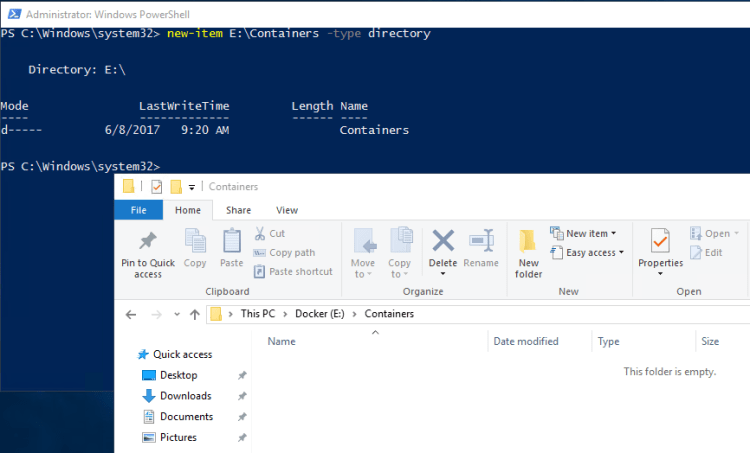

First we’ll create a new directory on the new drive to host the containers (I’m going to use a location on the E: drive on my host as I’m working in Azure and D: is assigned to the temporary storage drive): –

new-item E:\Containers -type directory

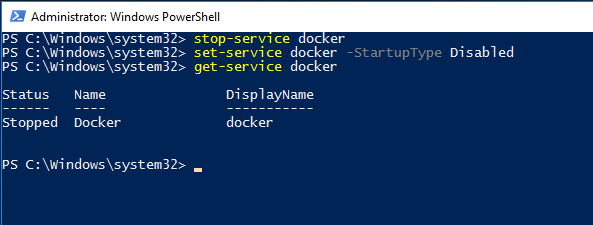

Now stop the existing docker service and disable it: –

stop-service Docker; set-service Docker -StartupType Disabled; get-service Docker

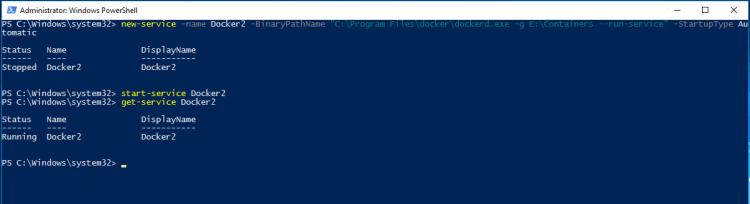

Now we’re going to create a new service pointing the container backend to the new location: –

new-service -name Docker2 -BinaryPathName '"C:\Program Files\docker\dockerd.exe" -g E:\Containers --run-service' -StartupType Automatic
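Before starting the new service it can be worth confirming how it was registered, since a typo in the binary path only shows up when the service fails to start. A small sketch using the standard Win32_Service class (Docker2 is the service name used in this post):

```powershell
# Show how the new service is registered, including the full command line
Get-CimInstance -ClassName Win32_Service -Filter "Name='Docker2'" |
    Select-Object Name, StartMode, State, PathName
```

The PathName column should show dockerd.exe followed by the -g switch and the custom location.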

Now start the new service up: –

start-service Docker2; get-service Docker2

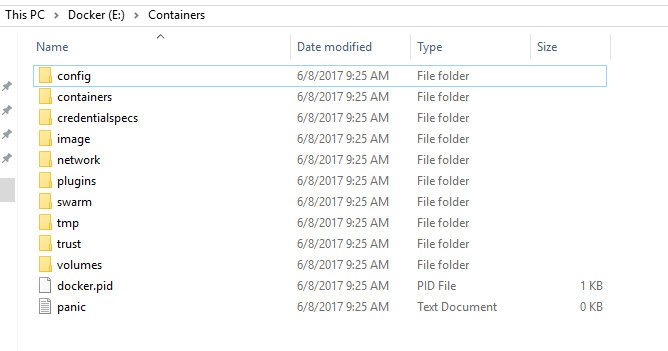
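Because the original service was only disabled and not removed, rolling back is cheap. A sketch of what that could look like, assuming the service names used above (Docker and Docker2) and an elevated PowerShell session:

```powershell
# Stop and remove the new service.
# sc.exe is spelled out because "sc" is an alias for Set-Content in Windows PowerShell.
Stop-Service Docker2
sc.exe delete Docker2

# Re-enable and start the original service
Set-Service Docker -StartupType Automatic
Start-Service Docker
Get-Service Docker
```

Anything pulled or created under the new service stays on disk in E:\Containers, so it’s up to you whether to clean that folder up afterwards.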

And check the new location: –

Cool, the service has generated the required folder structure upon startup and any new images/containers will be stored here.

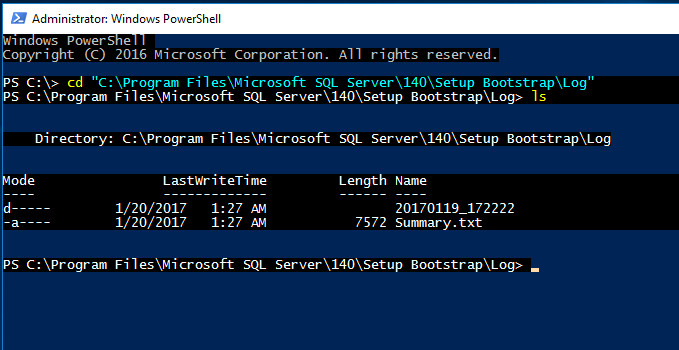
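The same check can be done from PowerShell. A minimal sketch, assuming the Docker2 service is running and E:\Containers is the location chosen earlier:

```powershell
# List the folder structure the daemon created in the new location
Get-ChildItem E:\Containers

# The new service starts with an empty store, so expect no images listed yet
docker images
```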

One thing to mention is that if you have images and containers in the old location, they won’t be available to the new service. I’ve tried copying the files and folders in C:\ProgramData\docker to the new location but keep getting access denied errors on the windowsfilter folder.

To be honest, I haven’t spent much time on that, because if you want to migrate your images from the old service to the new one you can export them out and then load them back in by following the instructions here.
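One common way to do that export and load is docker save and docker load. The sketch below is my assumption of how it could go with the two services from this post, not a copy of the linked instructions. The image name and archive path are hypothetical placeholders, it covers images only (not containers), and it assumes only one of the two services runs at a time.

```powershell
# Hypothetical names: swap in your own image and a folder with enough free space
$image   = 'myimage:latest'
$archive = 'E:\Backup\myimage.tar'
New-Item -Path (Split-Path $archive) -ItemType Directory -Force | Out-Null

# 1. Switch to the old service and save the image to a tar archive
Stop-Service Docker2
Set-Service Docker -StartupType Manual   # a disabled service cannot be started
Start-Service Docker
docker save -o $archive $image

# 2. Switch back to the new service and load the archive
Stop-Service Docker
Set-Service Docker -StartupType Disabled
Start-Service Docker2
docker load -i $archive
docker images
```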

Thanks for reading!