Last week in Part Two I went through how to create named volumes and map them to containers in order to persist data.

However, there is also another option available in the form of data volume containers.

The idea here is that we create a volume in a container and then mount that volume into another container. Confused? Yeah, me too, but it’s best to learn by doing, so let’s run through how to use these things now.

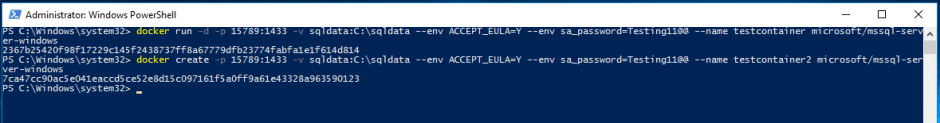

So first we create the data volume container and create a data volume: –

docker create -v C:\SQLServer --name Datastore microsoft/windowsservercore

Note that we’re not using an image running SQL Server. The windowsservercore & mssql-server-windows images use common layers, so we can save space by using the windowsservercore image for our data volume container.
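If you want to see what that `-v C:\SQLServer` flag actually created, `docker inspect` can print the container's mounts. Here's a minimal sketch, wrapped in a small POSIX shell function purely for reuse (the function name `container_mounts` is my own, not something from Docker). The `docker inspect` line inside can also be run on its own from PowerShell.

```shell
# Hypothetical helper (not from the original post): print the mounts of a
# container as JSON, using docker inspect's Go-template --format option.
# $1 = container name
container_mounts() {
  docker inspect --format '{{json .Mounts}}' "$1"
}

# Usage:
# container_mounts Datastore
```

For the Datastore container this should list a single mount of type volume pointing at the SQLServer directory, backed by a volume that Docker has named for you.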

Now create a container and mount the directory from the data volume container into it: –

docker run -d -p 16789:1433 --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --volumes-from Datastore --name testcontainer microsoft/mssql-server-windows

Actually, let’s also create another container but not start it up: –

docker create -p 16789:1433 --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --volumes-from Datastore --name testcontainer2 microsoft/mssql-server-windows

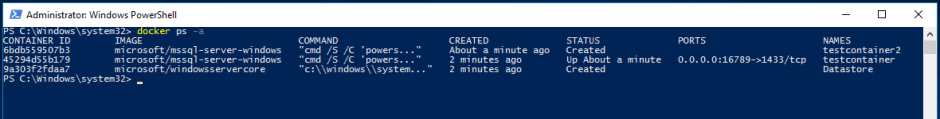

So we now have three containers: –

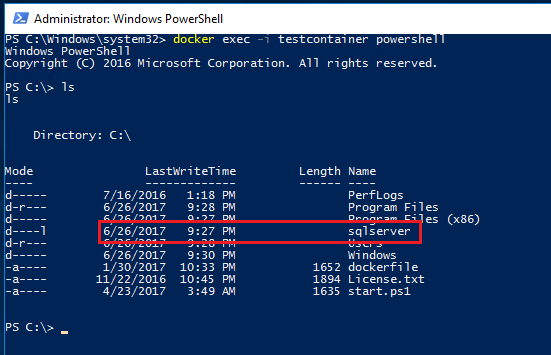

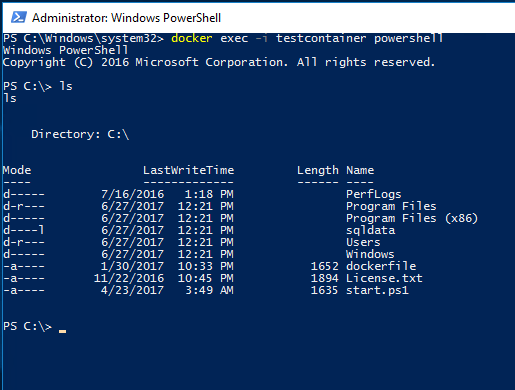

Let’s confirm that the volume is within the first container: –

docker exec -i testcontainer powershell ls

Update – April 2018

Loopback has now been enabled for Windows containers, so we can use localhost,16789 to connect locally. You can read more about it here

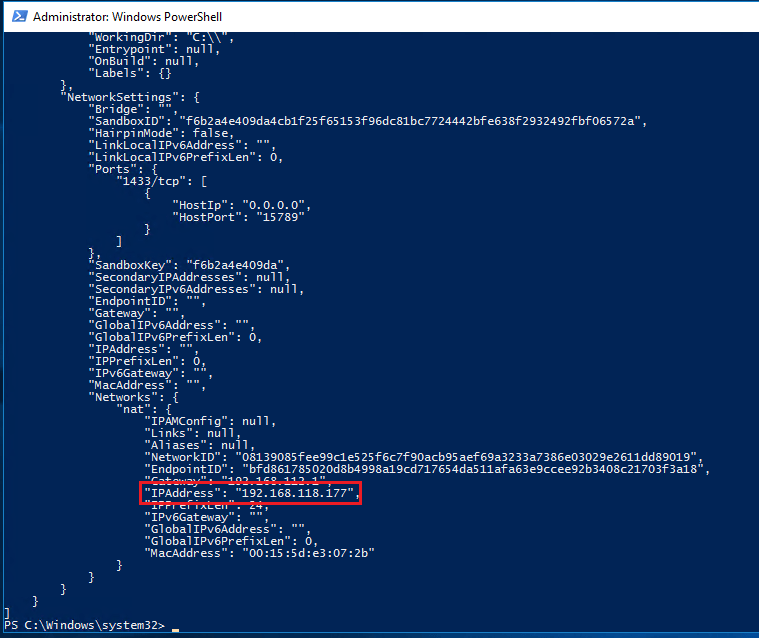

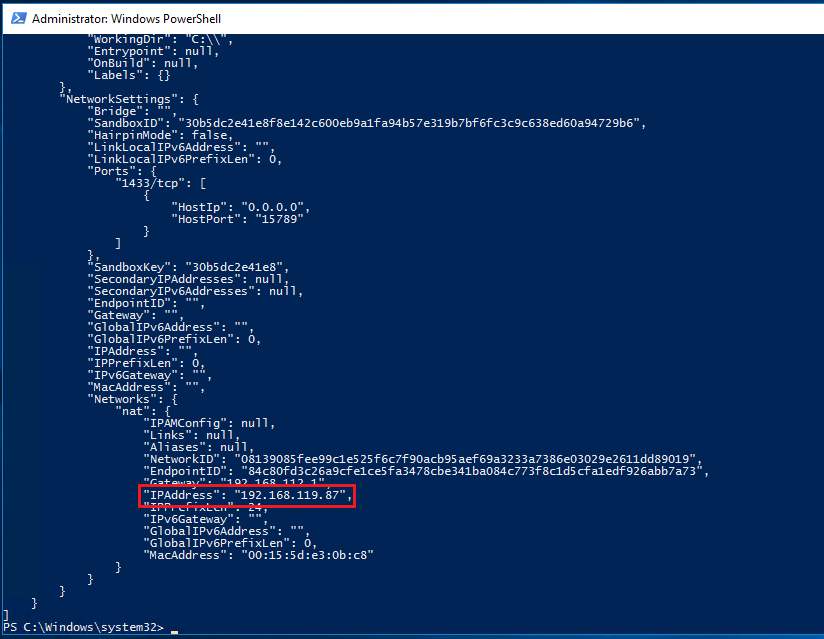

Ok, let’s get the private IP of the first container and connect to it: –

docker inspect testcontainer

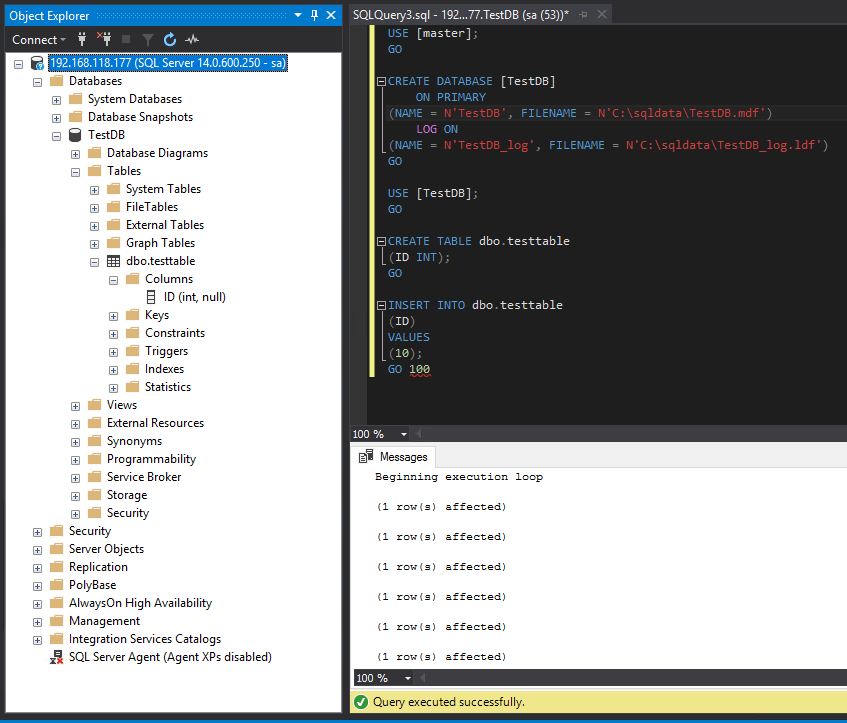

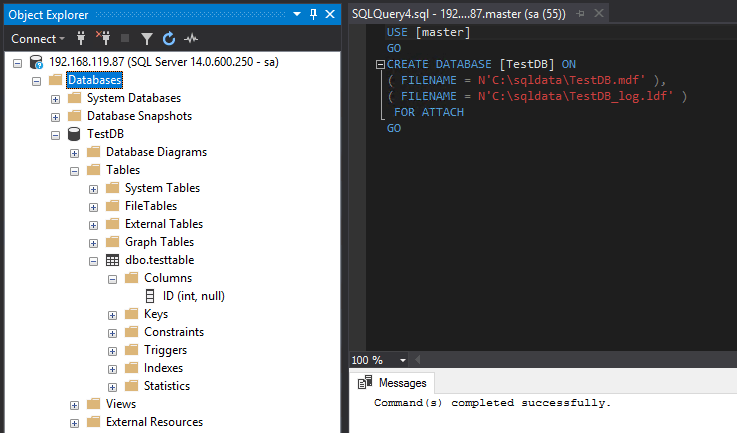
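The full `docker inspect` output is pretty long, so if all you're after is the IP it can be easier to ask for just that field. This is a sketch rather than the way I originally did it: it uses the `--format` option with a Go template that loops over the container's networks, wrapped in a shell function whose name (`container_ip`) is purely illustrative.

```shell
# Hypothetical helper (not from the original post): print only the IP
# address(es) of a container instead of the full inspect output.
# $1 = container name
container_ip() {
  docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

# Usage:
# container_ip testcontainer
```

The `docker inspect` line works the same way when run directly from PowerShell, with the container name in place of `"$1"`.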

Once you have the IP, connect to the SQL instance within the container and create a database (same as in Part One): –

USE [master];

GO

CREATE DATABASE [TestDB]

ON PRIMARY

(NAME = N'TestDB', FILENAME = N'C:\SQLServer\TestDB.mdf')

LOG ON

(NAME = N'TestDB_log', FILENAME = N'C:\SQLServer\TestDB_log.ldf')

GO

USE [TestDB];

GO

CREATE TABLE dbo.testtable

(ID INT);

GO

INSERT INTO dbo.testtable

(ID)

VALUES

(10);

GO 100

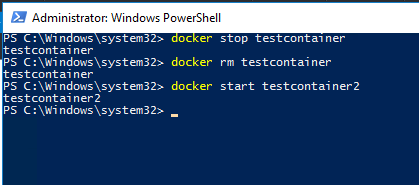

Now, let’s stop the first container and start the second container: –

docker stop testcontainer

docker start testcontainer2

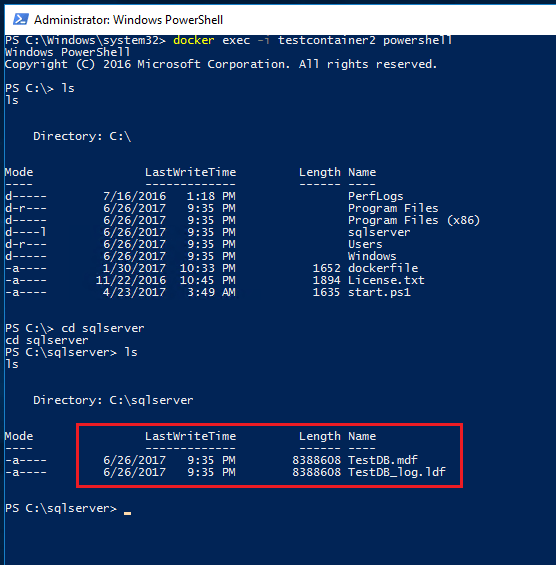

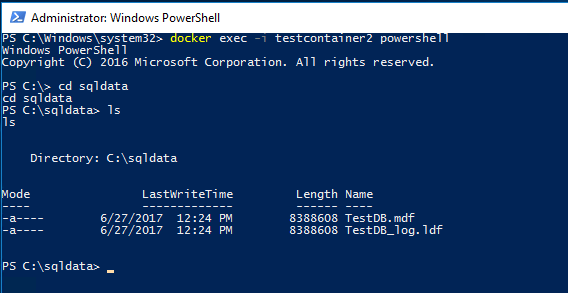

And let’s have a look in the container: –

docker exec -i testcontainer2 powershell

ls

cd sqlserver

ls

So the files of the database that we created in the first container are available to the second container via the data container!
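To convince yourself that both containers really are looking at the same volume (rather than two copies of the files), you can compare the volume names each one has mounted. A minimal sketch, assuming the containers created above; `volume_names` is a made-up helper name, and the template relies on the `Name` field that `docker inspect` reports for each volume mount.

```shell
# Hypothetical helper (not from the original post): print the names of the
# volumes mounted into a container, separated by spaces.
# $1 = container name
volume_names() {
  docker inspect --format '{{range .Mounts}}{{.Name}} {{end}}' "$1"
}

# Usage: the volume name printed for Datastore should also appear
# in the output for testcontainer2.
# volume_names Datastore
# volume_names testcontainer2
```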

Ok, so that’s all well and good but why would I want to use data volume containers instead of the other methods I covered in Part One & Part Two?

The docker documentation here says that it’s best to use a data volume container; however, they don’t give a reason as to why!

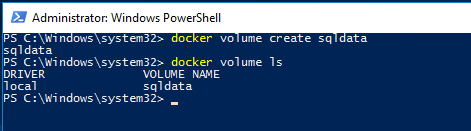

The research that I’ve been doing into this has brought me to discussions where people say that there’s no point in using data volume containers and that just using volumes will do the trick.

I tend to agree that there’s not much point in using data volume containers; volumes will give you everything that you need. But it’s always good to be aware of the different options that a technology can provide.

So which method of data persistence would I recommend?

I would recommend using volumes mapped from the host if you’re going to need to persist data with containers. By doing this you can have greater resilience in your setup by separating your database files from the drive that your docker system is hosted on. I also prefer to have greater control over where the files live, purely because I’m kinda anal about these things 🙂
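As a reminder of what that looks like, here's a rough sketch of running the same SQL Server image with a host directory mapped in. The function name, the `D:\SQLData` path, the container name and the port in the usage line are all assumptions for illustration; swap in whatever suits your host.

```shell
# Hypothetical helper (not from the original post): start a SQL Server
# container with a host directory mapped to C:\SQLServer inside it.
# $1 = host directory (e.g. a folder on a separate drive)
# $2 = container name
# $3 = host port to publish SQL Server's port 1433 on
run_sql_with_host_dir() {
  docker run -d -p "$3:1433" \
    --env ACCEPT_EULA=Y --env sa_password=Testing11@@ \
    -v "$1"':C:\SQLServer' \
    --name "$2" microsoft/mssql-server-windows
}

# Usage (path, name and port are illustrative):
# run_sql_with_host_dir 'D:\SQLData' testcontainer3 16790
```

The single quotes are there to stop a POSIX-style shell from eating the backslashes in the Windows paths. The `docker run` command itself is the same one used earlier in this post, with `-v` in place of `--volumes-from`.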

I know there’s an argument for using named volumes, as it keeps everything within the docker ecosystem, but for me, I don’t really care. I have control over my docker host so it doesn’t matter that there’s a dependency on the file system. What I would say though is, try each of these methods out, test thoroughly and come to a decision on your own (there, buck successfully passed!).

Thanks for reading!