Last week in Part One I went through how to mount directories from the host server into a docker container in order to persist data.

However, you don’t have to do this in order to share volumes between containers. Another method is to create named volumes within the docker ecosystem (as it were) and then map those volumes into containers upon creation.

Here’s how to do it.

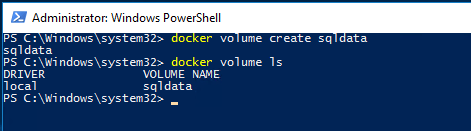

First we create the volume that we want to share within docker: –

docker volume create sqldata

docker volume ls
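If you want to see where docker actually keeps the volume on the host, `docker volume inspect` can print just its mountpoint. This is a minimal sketch, assuming a POSIX-style shell (Git Bash or similar) on the host; `volume_mountpoint` is a made-up helper name for illustration, not part of docker:

```shell
# Print the host path that backs a named volume.
# volume_mountpoint is a hypothetical wrapper; the docker call inside does the work.
volume_mountpoint() {
    docker volume inspect --format '{{ .Mountpoint }}' "$1"
}

# Usage (sqldata is the volume created above):
#   volume_mountpoint sqldata
```

In PowerShell the `docker volume inspect` line can be run directly with the volume name in place of `"$1"`.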

Now let’s create two containers both referencing the volume. One will be up and running whilst the other remains in the stopped state: –

docker run -d -p 15789:1433 -v sqldata:C:\sqldata --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --name testcontainer microsoft/mssql-server-windows

docker create -p 15789:1433 -v sqldata:C:\sqldata --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --name testcontainer2 microsoft/mssql-server-windows
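A quick way to confirm that one container is running and the other has only been created is to list both with their status. This is a rough sketch, assuming a POSIX-style shell; `container_states` is a hypothetical helper name, and the `name` filter matches on substrings so it picks up both containers:

```shell
# List every container whose name contains "testcontainer", with its status.
# container_states is an illustrative helper name, not a docker command.
container_states() {
    docker ps -a --filter "name=testcontainer" --format '{{.Names}}: {{.Status}}'
}

# Usage:
#   container_states
```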

Let’s have a look in the first container to see if the volume is there: –

docker exec -i testcontainer powershell

Update – April 2018

Loopback has now been enabled for Windows containers, so we can use localhost,15789 to connect locally. You can read more about it here

Ok, it’s there. So grab the private IP address of the container so that we can connect to it in SSMS: –

docker inspect testcontainer
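The full `docker inspect` output is fairly long, so it can help to pull out just the address. This is a minimal sketch using the `--format` flag with a Go template, assuming a POSIX-style shell; `container_ip` is a made-up helper name:

```shell
# Print the IP address(es) of a container across all attached networks.
# container_ip is a hypothetical wrapper around docker inspect.
container_ip() {
    docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

# Usage:
#   container_ip testcontainer
```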

Now we’ll create a database with its files in that location: –

USE [master];
GO

CREATE DATABASE [TestDB]
ON PRIMARY
(NAME = N'TestDB', FILENAME = N'C:\sqldata\TestDB.mdf')
LOG ON
(NAME = N'TestDB_log', FILENAME = N'C:\sqldata\TestDB_log.ldf')
GO

USE [TestDB];
GO

CREATE TABLE dbo.testtable
(ID INT);
GO

INSERT INTO dbo.testtable
(ID)
VALUES
(10);
GO 100

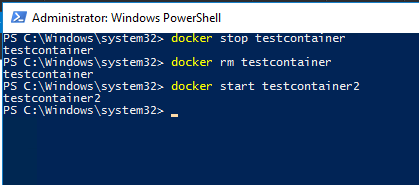

Right, now let’s blow that first container away and spin up the second one: –

docker stop testcontainer

docker rm testcontainer

docker start testcontainer2

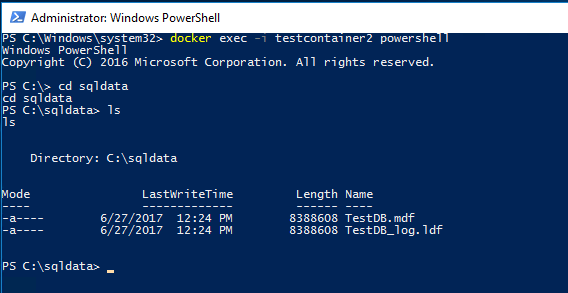

Hmm, all looks good. But let’s check that the volume is there with the database’s files: –

docker exec -i testcontainer2 powershell

cd sqldata

ls

Cool! The files are there, so let’s connect to the SQL instance within the second container and see if we can attach the database: –

docker inspect testcontainer2

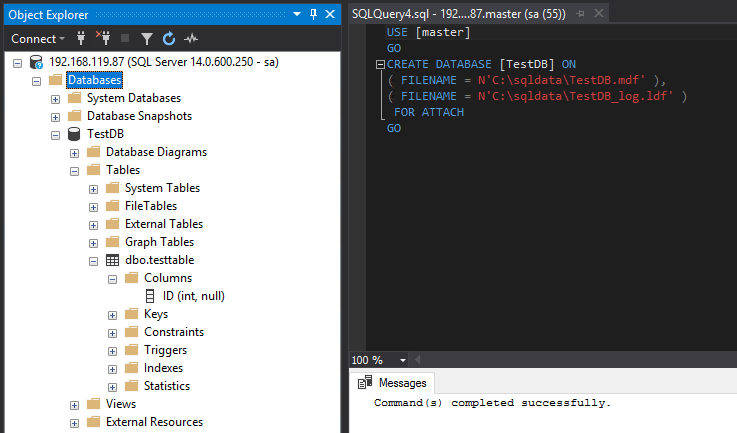

Let’s try the attach: –

USE [master]
GO
CREATE DATABASE [TestDB] ON
( FILENAME = N'C:\sqldata\TestDB.mdf' ),
( FILENAME = N'C:\sqldata\TestDB_log.ldf' )
FOR ATTACH
GO

Awesome stuff! We’ve got a database that was created in one container successfully attached in another.
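To double-check that the data made the trip as well, a quick count should come back with the 100 rows inserted earlier. This is only a sketch under a few assumptions: that `sqlcmd` is available inside the image, that the sa password is the one passed to `docker create`, and that you are in a POSIX-style shell. `count_rows` is a hypothetical helper name:

```shell
# Count the rows in TestDB.dbo.testtable from inside a container.
# $1 = container name, $2 = sa password.
# Assumes sqlcmd is on the PATH inside the container (not confirmed by the post).
count_rows() {
    docker exec "$1" sqlcmd -S localhost -U sa -P "$2" \
        -Q "SELECT COUNT(*) FROM TestDB.dbo.testtable;"
}

# Usage:
#   count_rows testcontainer2 'Testing11@@'
```

Running the same `SELECT COUNT(*)` from SSMS works just as well if `sqlcmd` turns out not to be in the container.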

So at this point you may be wondering what the advantage is of doing this over mounting folders from the host? Well, to be honest, I really can’t see what the advantages are.

The volume is completely contained within the docker ecosystem so if anything happens to the docker install, we’ve lost the data. OK, OK, I know it’s in C:\ProgramData\docker\volumes\ on the host but still I’d prefer to have more control over its location.

I like the idea of mounted volumes better if I’m honest. I can specify where my database files are with much more control and I can also have access if needed.

However, each to their own, and if you have a good reason for using named volumes over mounted volumes, let me know 🙂

Thanks for reading!

Nice posts! Mounting a volume (instead of host storage) allows you to mount a shared/clustered volume. That way the container can run on any node in the cluster, but at that point you want to start looking at docker swarm or Kubernetes. The rabbit hole of the container world just gets deeper and deeper. Fun though.