In a previous post I went through how to push an image to the Azure Container Registry.

Now let’s look at using that image to create an Azure Container Instance.

Azure Container Instances (ACI) are Microsoft’s serverless container technology. They allow us to spin up a container without having to manage the underlying infrastructure (VMs etc). Let’s run through spinning up an ACI now.

First off, I’ll be using the image I pushed up in my previous post. If you haven’t run through doing that, the link is here

OK, so let’s log in to Azure (using the Azure-CLI on Windows Subsystem for Linux): –

az login

In order to store credentials that can be used to access our Azure Container Registry and pull the container image, we first need to create a key vault: –

az keyvault create --resource-group apcontainers1 --name apkeyvault1

Now that the vault is created, we create a service principal and store its credentials in the vault: –

az keyvault secret set \
    --vault-name apkeyvault1 \
    --name ApContainerRegistry01-pull-pwd \
    --value $(az ad sp create-for-rbac \
        --name ApContainerRegistry01-pull \
        --scopes $(az acr show --name ApContainerRegistry01 --query id --output tsv) \
        --role reader \
        --query password \
        --output tsv)

Then we grab the service principal’s appId, which will be the username passed to the Azure Container Registry: –

az keyvault secret set \
    --vault-name apkeyvault1 \
    --name ApContainerRegistry01-pull-usr \
    --value $(az ad sp show --id http://ApContainerRegistry01-pull --query appId --output tsv)

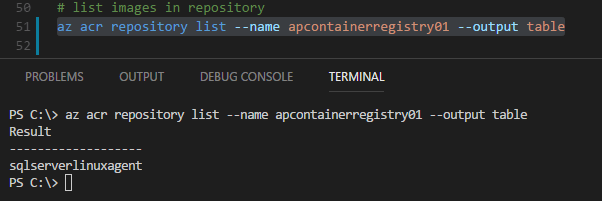
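Before moving on, it can be worth checking that both secrets actually landed in the vault. Here is a minimal sketch of that check. The check_pull_secrets function is a hypothetical helper of my own (it is not part of the Azure CLI), and it assumes the vault and secret names used above: –

```shell
# Hypothetical helper: reports whether each named secret exists in a key vault.
# Usage: check_pull_secrets <vault-name> <secret-name>...
check_pull_secrets() {
    local vault name
    vault="$1"
    shift
    for name in "$@"; do
        # 'az keyvault secret show' exits non-zero if the secret cannot be read
        if az keyvault secret show --vault-name "$vault" --name "$name" \
                --query name --output tsv >/dev/null 2>&1; then
            echo "found: $name"
        else
            echo "missing: $name"
            return 1
        fi
    done
}
```

Calling it as check_pull_secrets apkeyvault1 ApContainerRegistry01-pull-usr ApContainerRegistry01-pull-pwd should print a found: line for each secret, and it stops at the first one it cannot read.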

Great stuff. Now let’s confirm the repositories in our Azure Container Registry: –

az acr repository list --name apcontainerregistry01 --output table

We have the image that was pushed up to the ACR in my last post, so let’s deploy that to an Azure Container Instance: –

az container create \
    --resource-group apcontainers1 \
    --image apcontainerregistry01.azurecr.io/sqlserverlinuxagent:latest \
    --registry-login-server apcontainerregistry01.azurecr.io \
    --registry-username $(az keyvault secret show --vault-name apkeyvault1 -n ApContainerRegistry01-pull-usr --query value -o tsv) \
    --registry-password $(az keyvault secret show --vault-name apkeyvault1 -n ApContainerRegistry01-pull-pwd --query value -o tsv) \
    --name testcontainer1 \
    --cpu 2 --memory 4 \
    --environment-variables ACCEPT_EULA=Y SA_PASSWORD=Testing1122 \
    --ip-address public \
    --ports 1433

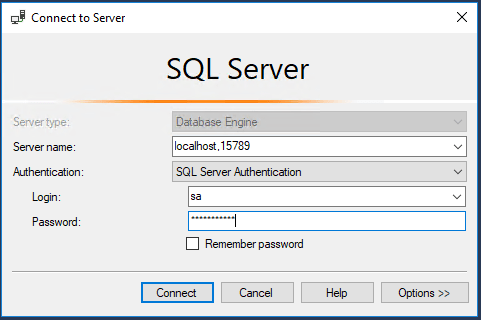

The code should be fairly self-explanatory. I’m using the username and password created earlier to access the ACR and am then spinning up a container from the sqlserverlinuxagent:latest image. The container has 2 CPUs and 4 GB of memory available to it, and it will be listening on a public IP address on port 1433 (be very careful with this).

At the time of writing, the only option available for ip-address is public. Hopefully further options will be available soon; I will update this blog if/when that happens.

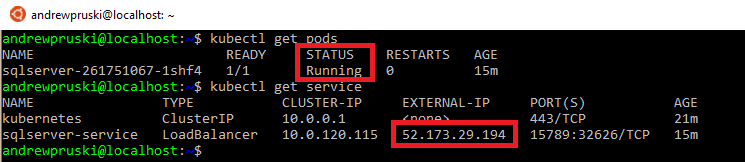

OK, let’s grab the container details: –

az container show --name testcontainer1 --resource-group apcontainers1

Once the provisioning state is Succeeded and there’s an IP address, we are good to go.
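If you’d rather not re-run that command by hand, the wait can be scripted. What follows is only a rough sketch: wait_for_container is a hypothetical helper name, the attempt count and delay are arbitrary defaults I picked, and I’m assuming the provisioningState and ipAddress.ip fields that az container show returns for this container: –

```shell
# Hypothetical helper: polls 'az container show' until the container group
# reports provisioningState "Succeeded" and has an IP address, then prints the IP.
# Usage: wait_for_container <resource-group> <name> [attempts] [delay-seconds]
wait_for_container() {
    local group name attempts delay state ip i
    group="$1"
    name="$2"
    attempts="${3:-30}"   # assumed default: 30 polls
    delay="${4:-10}"      # assumed default: 10 seconds between polls
    i=1
    while [ "$i" -le "$attempts" ]; do
        state="$(az container show --resource-group "$group" --name "$name" \
            --query provisioningState --output tsv)"
        ip="$(az container show --resource-group "$group" --name "$name" \
            --query ipAddress.ip --output tsv)"
        # Treat an empty value (or a literal "None") as "no IP address yet"
        if [ "$state" = "Succeeded" ] && [ -n "$ip" ] && [ "$ip" != "None" ]; then
            echo "$ip"
            return 0
        fi
        if [ "$state" = "Failed" ]; then
            echo "provisioning failed" >&2
            return 1
        fi
        sleep "$delay"
        i=$((i + 1))
    done
    echo "timed out waiting for $name" >&2
    return 1
}
```

Something like ip=$(wait_for_container apcontainers1 testcontainer1) would then leave the public IP address in $ip, ready to be used for a connection on port 1433.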

If you want to view the logs of the container: –

az container logs --name testcontainer1 --resource-group apcontainers1
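The logs are also a handy way to tell whether SQL Server inside the container has finished starting up. As a small sketch, the hypothetical sql_server_ready helper below searches the log output for the "ready for client connections" message that SQL Server normally writes to its error log on startup. I’m assuming the sqlserverlinuxagent image logs that same message: –

```shell
# Hypothetical helper: succeeds once the container logs contain SQL Server's
# "ready for client connections" message (assumed to be logged by this image).
# Usage: sql_server_ready <resource-group> <name>
sql_server_ready() {
    az container logs --resource-group "$1" --name "$2" 2>/dev/null \
        | grep "SQL Server is now ready for client connections" >/dev/null
}
```

It prints nothing and just sets the exit code, so it can be used as: sql_server_ready apcontainers1 testcontainer1 && echo "ready"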

And we can also remote into the container: –

az container exec --resource-group apcontainers1 --name testcontainer1 --exec-command bash

Finally, to clean up (delete the container): –

az container delete --name testcontainer1 --resource-group apcontainers1

So, that’s how to deploy a custom container image from the Azure Container Registry to an Azure Container Instance.

Thanks for reading!