Recently I noticed that Microsoft uploaded a new dockerfile to the mssql-docker repository on GitHub. This dockerfile is in the mssql-server-linux-non-root directory and (you guessed it) allows SQL Server containers to run as non-root.

But why is running a container as root bad? Let’s run through an example.

Logged in to the host as a non-root user, run a SQL Server 2019 container with /etc mounted: –

docker run -d -p 15789:1433 \
  --volume /etc:/etc \
  --env SA_PASSWORD=Testing1122 \
  --env ACCEPT_EULA=Y \
  --name testcontainer \
  mcr.microsoft.com/mssql/server:2019-RC1-ubuntu

Have a look at the logs: –

docker logs testcontainer

So even though I ran the container as a non-root user, the container is running as root.
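If you'd rather check this from a script than read the logs, here's a minimal sketch. The helper name container_runs_as_root is hypothetical (it's not part of Docker or the original walkthrough), and it assumes the docker CLI is on your PATH and that the image includes the id utility: –

```shell
# Hypothetical helper: succeeds (exit code 0) when the default user
# inside a running container has UID 0, i.e. the container runs as root.
# Assumes the docker CLI is available and the image ships `id`.
container_runs_as_root() {
    # docker exec runs the command as the container's configured user
    container_uid=$(docker exec "$1" id -u) || return 2
    [ "$container_uid" = "0" ]
}
```

Calling container_runs_as_root testcontainer && echo "running as root" should print the message for the container above, assuming it is still running.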

Here’s the reason that’s bad. Exec into the container: –

docker exec -it testcontainer bash

Now create a user and add it to the sudo (super user) group: –

useradd testuser
passwd testuser
adduser testuser sudo

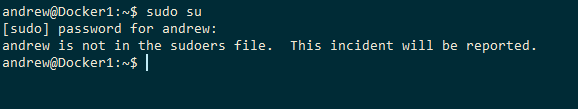

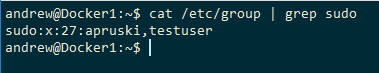

The user has been created and added to the super user’s group within the container. But if we come out of the container and run: –

cat /etc/group | grep sudo

The user is in the super user group on the host! Which means we can do: –

su testuser

Because we mounted the /etc directory into the container, the user created in the container is also created on the host!
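The grep above matches any line containing "sudo", so here's a slightly stricter sketch of the same check. The function name user_in_group is hypothetical, and it only looks at the supplementary member list (the fourth field) of a group file, so a user whose primary group is sudo would not be reported: –

```shell
# Hypothetical helper: succeeds when USER is listed as a member of GROUP
# in a group file (defaults to /etc/group).
# Only checks the comma-separated member list in field 4; primary group
# membership is stored in /etc/passwd and is not covered here.
user_in_group() {
    awk -F: -v usr="$1" -v grp="$2" '
        $1 == grp {
            n = split($4, members, ",")
            for (i = 1; i <= n; i++)
                if (members[i] == usr) found = 1
        }
        END { exit !found }
    ' "${3:-/etc/group}"
}
```

Running user_in_group testuser sudo on the host after the steps above should succeed, and it should fail once the user has been removed from the group.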

And that’s why running containers as root is bad.

EDIT: November 2019

The new SQL Server 2019 images run as a non-root user by default. These images are: –

mcr.microsoft.com/mssql/server:2019-GA-ubuntu-16.04

mcr.microsoft.com/mssql/server:2019-GDR1-ubuntu-16.04

So there’s no need to build your own image but the process below will show you how to (if you want to see how it’s done).

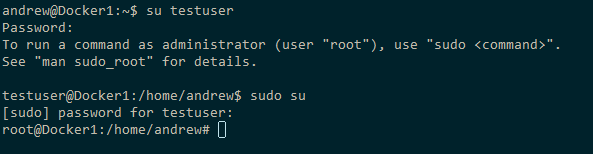

Let’s fix this by running SQL Server 2019 in a non-root container. First thing to do is create a mssql user on the host (you’ll have to run this as a user with sudo rights): –

useradd -M -s /bin/bash -u 10001 -g 0 mssql

N.B. – this user is needed on the host because the dockerfile creates the same user in the image. Without it, the build will complete but any containers created from the image will crash.
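Before building, it can be worth confirming which account (if any) owns UID 10001 on the host. This is a minimal sketch; the function name uid_owner is hypothetical and it simply reads a passwd-format file, so it won't see users that come from LDAP or other directory services: –

```shell
# Hypothetical helper: prints the user name that owns a given UID in a
# passwd-format file (defaults to /etc/passwd) and fails if none does.
uid_owner() {
    awk -F: -v uid="$1" '
        $3 == uid { print $1; found = 1 }
        END { exit !found }
    ' "${2:-/etc/passwd}"
}
```

After the useradd command above, uid_owner 10001 should print mssql. If it prints a different name, that UID was already taken on the host.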

Now, build the image from the dockerfile on GitHub (run this from the directory containing the dockerfile): –

docker build -t 2019-nonroot .

Let’s try to run this container with /etc mounted: –

docker run -d -p 15799:1433 \
  --volume /etc:/etc \
  --env SA_PASSWORD=Testing1122 \
  --env ACCEPT_EULA=Y \
  --name testcontainer2 \
  2019-nonroot

We can see that the container is running as the user mssql and it’s errored out as it does not have access to the /etc directory that we tried to mount!

So now that we have the option to run SQL Server in containers as a non-root user, I would absolutely recommend that you do so.

Thanks for reading!