Normally when I work with SQL instances within containers I treat them as throw-away objects. Any modifications that I make to the databases within will be lost when I drop the container.

However, what if I want to persist the data that I have in my containers? Well, there are options to do just that. One method is to mount a directory from the host into a container.

Full documentation can be found here but I’ll run through an example step-by-step here.

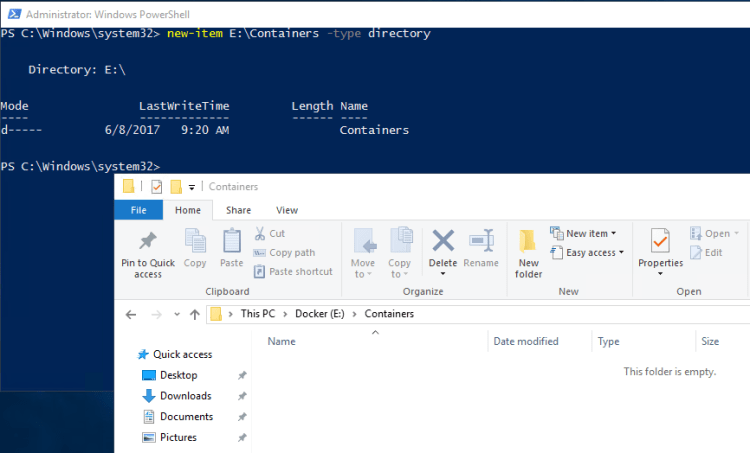

First create a directory on the host that we will mount into the container: –

mkdir D:\SQLServer

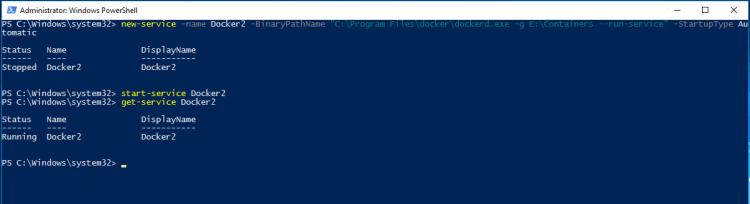

And now build a container using the -v flag to mount the directory: –

docker run -d -p 15789:1433 -v D:\SQLServer:C:\SQLServer --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --name testcontainer microsoft/mssql-server-windows

So that’s built us a container running an empty instance of SQL Server with the D:\SQLServer directory on the host mounted as C:\SQLServer in the container.

Update – April 2018

Loopback has now been enabled for Windows containers, so we can use localhost,15789 to connect locally. You can read more about it here

Now, let’s create a database on the drive that we’ve mounted in the container. First grab the container private IP (as we’re connecting locally on the host): –

docker inspect testcontainer

And use it to connect to the SQL instance within the container:-

Now create the database with its files in the mounted directory : –

USE [master]; GO CREATE DATABASE [TestDB] ON PRIMARY (NAME = N'TestDB', FILENAME = N'C:\SQLServer\TestDB.mdf') LOG ON (NAME = N'TestDB_log', FILENAME = N'C:\SQLServer\TestDB_log.ldf') GO

And let’s create a simple table with some data: –

USE [TestDB]; GO CREATE TABLE dbo.testtable (ID INT); GO INSERT INTO dbo.testtable (ID) VALUES (10); GO 100

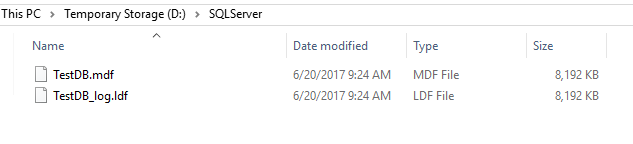

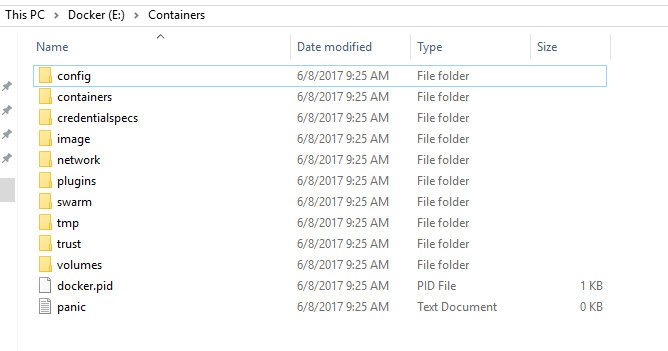

Cool, if we check the directory on the host, we’ll see the database’s files: –

OK, now let’s blow that container away: –

docker stop testcontainer docker rm testcontainer

If you check the host, the directory with the database files should still be there.

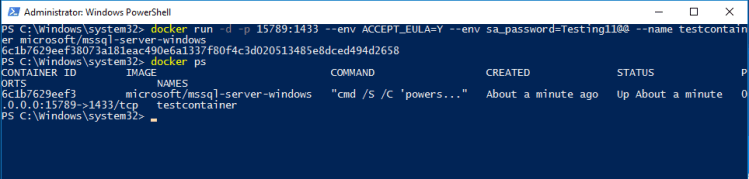

Now, let’s create another container, mounting the directory back in: –

docker run -d -p 15799:1433 -v D:\SQLServer:C:\SQLServer --env ACCEPT_EULA=Y --env sa_password=Testing11@@ --name testcontainer2 microsoft/mssql-server-windows

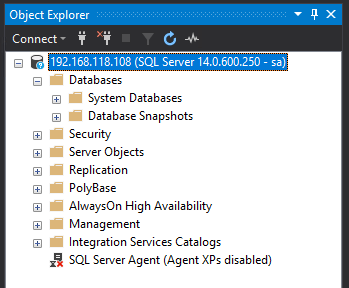

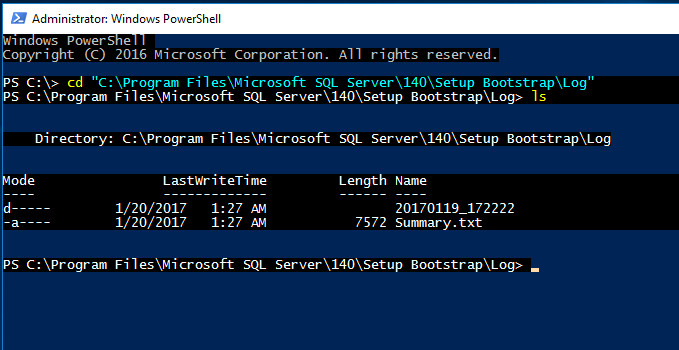

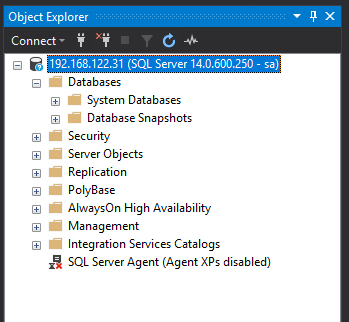

Same as before, grab the private IP and connect into SQL Server: –

No database! Of course, we need to attach it! So…

And there it is!

Cool, so a database created in one container has been attached into a SQL instance running in another. Notice that I didn’t detach the database before stopping and then dropping the container. Now, that doesn’t mean that the process of shutting down a container stops the SQL instance gracefully. It’d be interesting to see what happens if a container is stopped whilst queries are running, I bet if we deleted the container without stopping it first the database would be corrupt.

Anyway, using the -v flag to mount directories from the host into a container is one way of persisting data when using docker.

Thanks for reading!