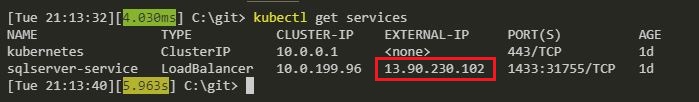

Last week I was working on my Azure Kubernetes Service cluster when I ran into a rather odd issue. I’d created a service with a type of LoadBalancer in order to get an external IP to connect to SQL Server running in a pod from my local machine.

I’ve done this quite a few times at this point so wasn’t expecting anything out of the ordinary.

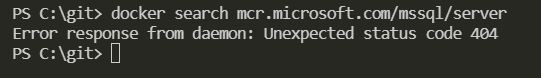

However, my service never got its external IP address. It remained in a state of pending: –

N.B. – The images in this post were taken after the issue was resolved, as I didn’t think to screenshot everything at the time 😦

I knew something was wrong after about 20 minutes as the IP should have definitely come up by then.

So I delved into the service by running: –

kubectl describe service sqlserver-service

And was greeted with the following: –

Error creating load balancer (will retry): failed to ensure load balancer for service default/sqlserver-service: azure.BearerAuthorizer#WithAuthorization: Failed to refresh the Token for request to https://management.azure.com/subscriptions/subscriptionID/resourceGroups/MC_containers1_SQLK8sCluster1_eastus/providers/Microsoft.Network/loadBalancers?api-version=2017-09-01: StatusCode=0 -- Original Error: adal: Refresh request failed. Status Code = '401'. Response body: {"error":"invalid_client","error_description":"AADSTS70002: Error validating credentials. AADSTS50012: Invalid client secret is provided.\r\nTrace ID: 17d1f0ce-6c11-4f8e-895d-29194d973900\r\nCorrelation ID: 3e11d85c-77bf-4041-a41d-267bfd5f066c\r\nTimestamp: 2019-01-23 18:58:59Z","error_codes":[70002,50012],"timestamp":"2019-01-23 18:58:59Z","trace_id":"17d1f0ce-6c11-4f8e-895d-29194d973900","correlation_id":"3e11d85c-77bf-4041-a41d-267bfd5f066c"}

Yikes! What’s happened there?

I logged a case with MS Support and when they came back to me, they advised that the service principal that is spun up in the background had expired. This service principal is required to allow the cluster to interact with the Azure APIs in order to create other Azure resources.

When a service is created within AKS with a type of LoadBalancer, a Load Balancer is created in the background which provides the external IP I was waiting on to allow me to connect to the cluster.

Because this principal had expired, the cluster was unable to create the Load Balancer and the external IP of the service remained in the pending state.
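If you want a quick way to check whether a pending service is suffering from the same problem, the describe output can be piped through a small filter. This is only a sketch: the function name is made up for illustration, and the strings it looks for are taken straight from the error message above, so other authentication failures may be worded differently.

```shell
# Hypothetical helper (not part of kubectl or the Azure CLI).
# Reads `kubectl describe service` output on stdin and succeeds if it
# contains the authentication failure quoted above
# (AADSTS50012, "Invalid client secret is provided").
has_sp_auth_error() {
    grep -q -e 'AADSTS50012' -e 'Invalid client secret'
}

# Usage (assumes kubectl is pointed at the affected cluster):
#   kubectl describe service sqlserver-service | has_sp_auth_error \
#       && echo "service principal credentials were rejected"
```

A match only tells you the credentials were rejected. Confirming that the cause is expiry still needs the check on the service principal itself.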

So I needed to update the service principal so that it was no longer expired. To do that I needed two pieces of information: the clientId of the cluster and the secret used as the service principal password. Getting these wasn’t the easiest process in the world, so I’ll run through how to do it here.

First, log in to Azure:-

az login

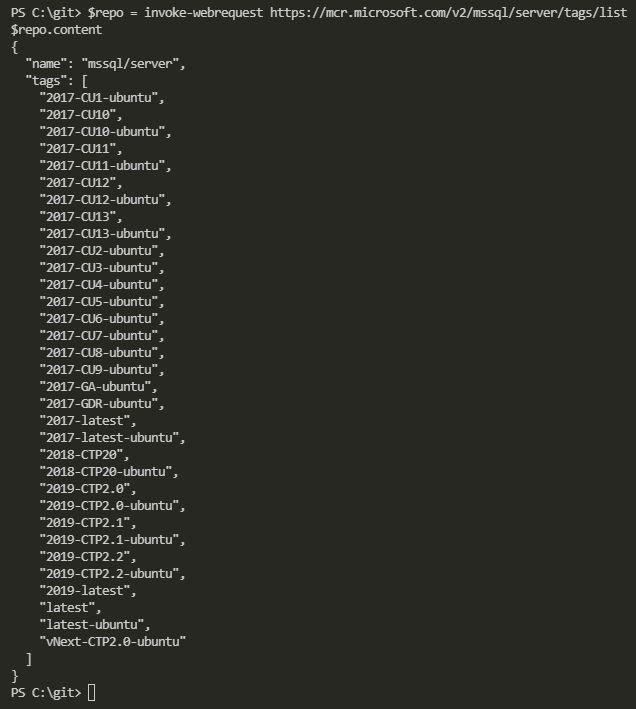

Then get the clientId of your cluster: –

az aks show --resource-group RESOURCEGROUPNAME --name CLUSTERNAME --query "servicePrincipalProfile.clientId" --output tsv

To confirm that the principal is expired: –

az ad sp credential list --id CLIENTID

Check the endDate value highlighted above. If it’s past the current date, that’s your issue!
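If you'd rather not compare dates by eye, the check can be scripted. The sketch below is a hypothetical helper, not an Azure CLI feature. It assumes the endDate value starts with an ISO 8601 date (YYYY-MM-DD) and compares only the date part against today's UTC date.

```shell
# Hypothetical helper: succeeds (exit 0) if END_DATE is before TODAY.
# Assumption: END_DATE begins with YYYY-MM-DD; the time of day is ignored.
# TODAY is optional and defaults to the current UTC date.
sp_is_expired() {
    end_day=$(printf '%s' "$1" | cut -c1-10)
    today=${2:-$(date -u +%Y-%m-%d)}
    # Appending "" forces awk to compare the two values as strings,
    # which orders YYYY-MM-DD dates correctly.
    awk -v a="$end_day" -v b="$today" 'BEGIN { exit !((a "") < (b "")) }'
}

# Usage (assumes the first credential in the list is the one in use and
# that the field is called endDate, as in the output described above):
#   end=$(az ad sp credential list --id CLIENTID --query "[0].endDate" -o tsv)
#   sp_is_expired "$end" && echo "service principal credential has expired"
```

A credential that ends today is treated as not yet expired, so take the result as a hint and check the exact time if it's close.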

You may have noticed that mine is set 10 years from now. This is because I’m running these commands to get screenshots after I’ve fixed the issue…I figured 10 years should be long enough 🙂

The way I got the secret was to ssh onto one of the nodes in my cluster. This is a little involved but I’ll go through it step-by-step.

Resources for an AKS cluster are created in a separate resource group (for….reasons). To get that resource group name run: –

az aks show --resource-group RESOURCEGROUPNAME --name CLUSTERNAME --query nodeResourceGroup -o tsv

Then grab the nodes in the cluster (RESOURCEGROUPNAME2 is the output of the above command): –

az vm list --resource-group RESOURCEGROUPNAME2 -o table

And then get the IP address of each node: –

az vm list-ip-addresses --resource-group RESOURCEGROUPNAME2 -o table

OK, now that I had the node details I could copy my SSH public key into one of them.

The ssh keys were generated when I created the cluster using the --generate-ssh-keys flag. If you didn’t specify this you’ll need to generate the keys before continuing on.

So I copied my public key into one of the nodes: –

az vm user update \
  --resource-group RESOURCEGROUPNAME2 \
  --name NODENAME \
  --username azureuser \
  --ssh-key-value id_rsa.pub

N.B. – I found it easiest to navigate to the directory that held my ssh keys before running this script

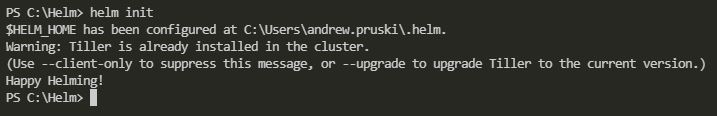

Then I spun up a pod with openssh-client installed so that I could ssh into one of the nodes from within the cluster (the nodes aren’t accessible externally).

To do this I created a Docker image from the alpine:latest image, installed the client, pushed it to Docker Hub, and then ran: –

kubectl run -it --rm aks-ssh --image=dbafromthecold/alpine_ssh:latest

N.B. – the dbafromthecold/alpine_ssh:latest image is public so this will work for you as well

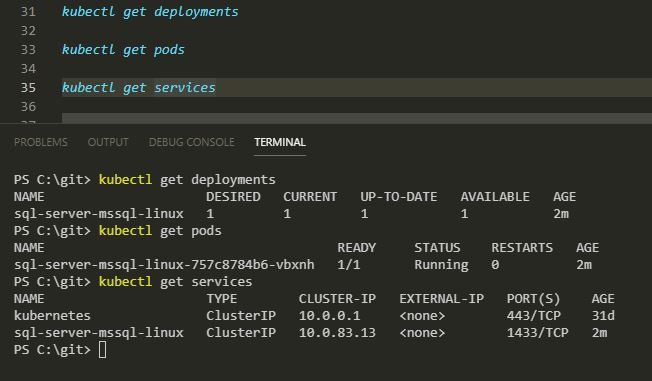

In a separate command prompt I got the name of the pod:-

kubectl get pods

And then copied my private ssh key into the pod:-

kubectl cp id_rsa PODNAME:/id_rsa

Once the key was copied in, I closed that window and went back to the original window where I had run the pod and changed the permissions on the private key: –

chmod 0600 id_rsa

And then I was able to ssh into one of the nodes:-

ssh -i id_rsa azureuser@NODEIPADDRESS

The secret is contained in a json file (as aadClientSecret). To grab it I ran: –

sudo cat /etc/kubernetes/azure.json

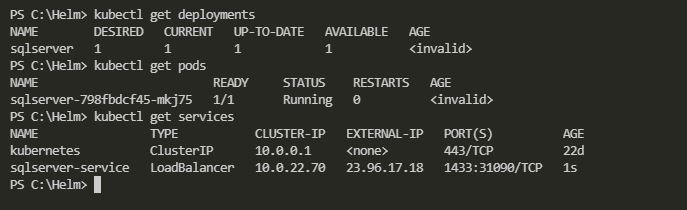
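The file contains a lot of other settings, so it can help to pull out just the one value. Here is a minimal sketch using sed, on the assumption that jq may not be installed on the node. The function name is hypothetical, and it assumes the aadClientSecret key and its value sit on a single line with no escaped quotes in the value.

```shell
# Hypothetical helper: reads JSON on stdin and prints the value of
# "aadClientSecret". Assumes key and value are on one line and the value
# contains no escaped double quotes.
get_aad_client_secret() {
    sed -n 's/.*"aadClientSecret"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Usage on the node (reading the file needs root):
#   sudo cat /etc/kubernetes/azure.json | get_aad_client_secret
```

Bear in mind that this prints the secret to the terminal, so clear your scrollback or copy it somewhere safe afterwards.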

Once I had that information I could exit the node, then the pod, and update the service principal: –

az ad sp credential reset --name CLIENTID --password SECRET --years 10

I confirmed that the service principal had been updated: –

az ad sp credential list --id CLIENTID

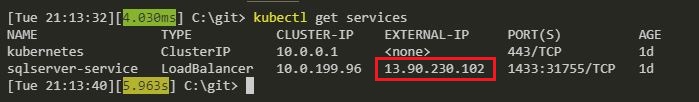

And I was then able to deploy a service of type LoadBalancer and get an external IP!

kubectl get services

Phew 🙂

Hope that helps anyone who runs into the same issue!