One question that I get asked regularly is, “can you limit the host resources to individual containers?”

This is a great question, as you don’t want one container consuming all the resources on your host and starving all the other containers.

It’s actually really simple to limit the resources available to containers: there are a couple of switches that can be specified at runtime. For example:

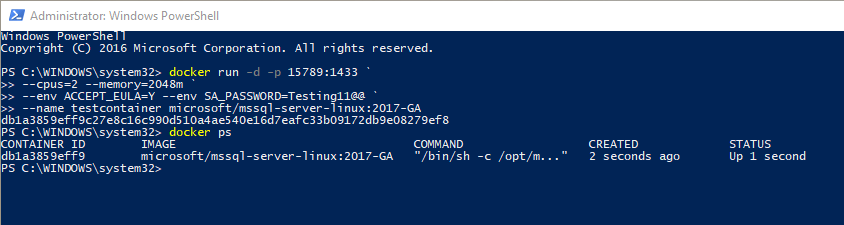

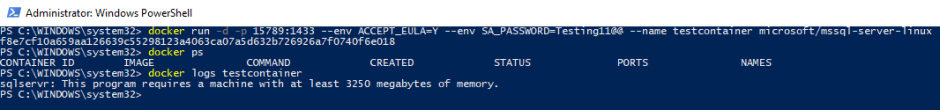

```powershell
docker run -d -p 15789:1433 `
    --cpus=2 --memory=2048m `
    --env ACCEPT_EULA=Y --env SA_PASSWORD=Testing11@@ `
    --name testcontainer microsoft/mssql-server-linux:2017-GA
```

What I’ve done here is use the `--cpus` and `--memory` switches to limit that container to a maximum of 2 CPUs and 2GB of RAM. Other options are available; the Docker documentation on runtime resource constraints has the full list.
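To confirm the limits actually took effect, one option is to read them back with `docker inspect`, which reports them in raw units: `HostConfig.NanoCpus` (billionths of a CPU) and `HostConfig.Memory` (bytes). Below is a minimal sketch, assuming a POSIX shell with awk (for example bash under WSL or Git Bash on Windows). `describe_limits` is a hypothetical helper, not part of Docker.

```shell
# describe_limits NANOCPUS MEMORY_BYTES
# Hypothetical helper: converts the raw values reported by docker inspect
# back into the units used with --cpus and --memory.
# A value of 0 means that limit was not set on the container.
describe_limits() {
  awk -v n="$1" -v m="$2" 'BEGIN { printf "cpus=%g memory=%dm\n", n / 1e9, m / 1048576 }'
}

# Example usage against the container started above (needs Docker running):
#   describe_limits $(docker inspect --format '{{.HostConfig.NanoCpus}} {{.HostConfig.Memory}}' testcontainer)
# For --cpus=2 --memory=2048m this should print: cpus=2 memory=2048m
```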

Simple, eh? But it does show something interesting.

I’m running Docker on my Windows 10 machine, using Linux containers. This works by spinning up a Linux VM in Hyper-V to run the containers (the Docker for Windows documentation covers this in more detail).

When that Linux VM first spins up, it only has 2GB of RAM available to it. That isn’t enough to run SQL Server containers; if you try, the container will error out on startup.

The Linux VM needs at least 3250MB of RAM available to it in order to run a SQL Server container. However, an individual container can still be limited to less than 3250MB, as I’ve done here with `--memory=2048m`.
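The two numbers apply to different things: the 3250MB minimum applies to the VM, while `--memory` only caps what one container may use inside it. A minimal sketch for checking the VM side, assuming a POSIX shell; `check_vm_memory` is a hypothetical helper that takes the total memory in bytes that `docker info --format '{{.MemTotal}}'` reports for the Docker host (here, the Linux VM).

```shell
# Minimum VM memory (in MB) needed before a SQL Server container will start,
# as described above.
SQL_MIN_VM_MB=3250

# check_vm_memory BYTES
# Hypothetical helper: reports whether a Docker host with BYTES of RAM meets
# the minimum, and returns non-zero if it does not.
check_vm_memory() {
  vm_mb=$(( $1 / 1048576 ))
  if [ "$vm_mb" -ge "$SQL_MIN_VM_MB" ]; then
    echo "ok: VM has ${vm_mb}MB (>= ${SQL_MIN_VM_MB}MB)"
  else
    echo "too small: VM has ${vm_mb}MB (< ${SQL_MIN_VM_MB}MB)"
    return 1
  fi
}

# Example usage (needs Docker running):
#   check_vm_memory "$(docker info --format '{{.MemTotal}}')"
```

The total the kernel reports is usually a little below the figure set in Docker’s settings, since some memory is reserved, so a result close to the threshold is worth treating with care.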

But how do you decide what limits to impose? Well, there’s always trial and error (it’s served me well), or you can use the `docker stats` command.

What I’d recommend is spinning up a few containers using Docker Compose, running some workload against them, and then monitoring them with:

```powershell
docker stats
```

This way you can monitor the resources consumed by the containers and make an informed decision when it comes to setting limits.
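To turn those observations into numbers, `docker stats --no-stream` takes a single snapshot instead of streaming, and `--format` picks out just the columns you need. The sketch below assumes a POSIX shell with awk; `suggest_limits` is a hypothetical helper, it covers memory only, and its 25% headroom default is an arbitrary illustrative value rather than a recommendation.

```shell
# suggest_limits [HEADROOM_PERCENT]
# Hypothetical helper: reads lines of the form
#   NAME USAGE / LIMIT        e.g. "testcontainer 812.3MiB / 2GiB"
# and prints a --memory value equal to the observed usage plus
# HEADROOM_PERCENT (default 25), rounded up to whole MiB.
suggest_limits() {
  awk -v h="${1:-25}" '
    {
      usage = $2
      # Separate the number from its unit suffix (B, KiB, MiB or GiB).
      num = usage;  sub(/[A-Za-z]+$/, "", num)
      unit = usage; sub(/^[0-9.]+/, "", unit)

      # Normalise the observed usage to MiB.
      mib = num + 0
      if (unit == "B")   mib = num / 1048576
      if (unit == "KiB") mib = num / 1024
      if (unit == "GiB") mib = num * 1024

      # Add headroom, then round up to a whole number of MiB.
      target = mib * (1 + h / 100)
      limit = int(target)
      if (limit < target) limit++

      printf "%s --memory=%dm\n", $1, limit
    }'
}
```

For example: `docker stats --no-stream --format '{{.Name}} {{.MemUsage}}' | suggest_limits 25`. A snapshot only captures a single moment, so it is worth taking one while the workload is at its busiest.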

Thanks for reading!