I previously wrote a post on how to convert a SQL Server Docker image to a Windows Subsystem for Linux distribution.

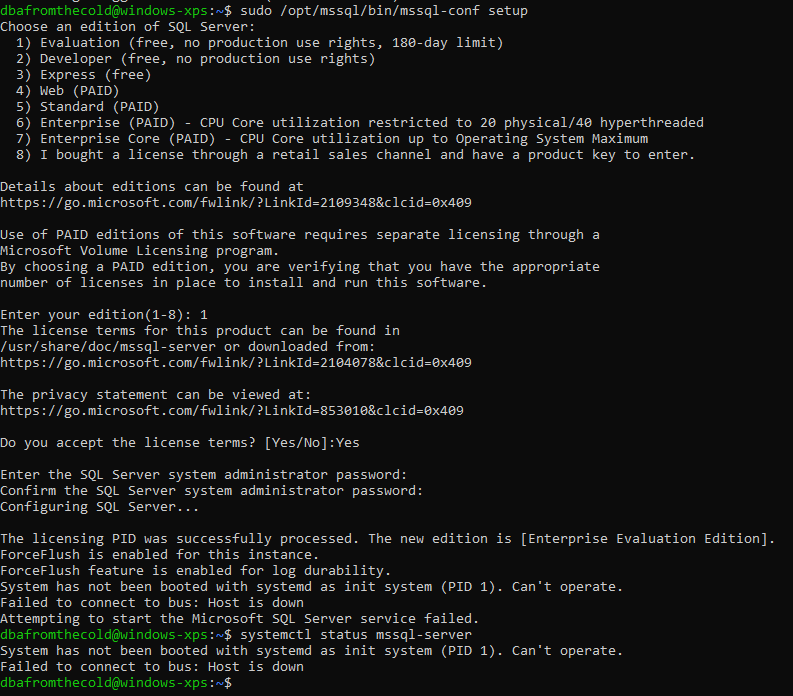

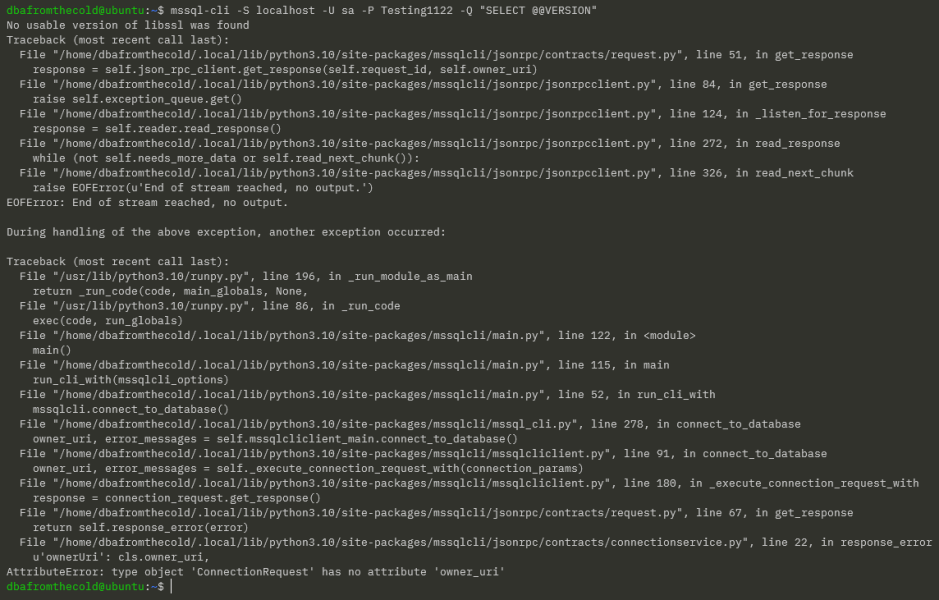

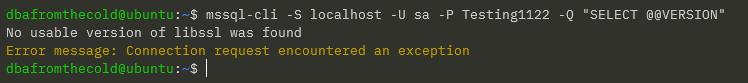

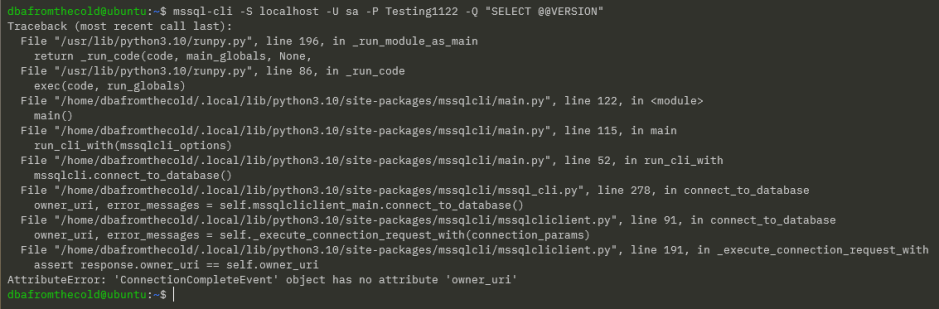

I did this because, until now, if you tried to run SQL Server in WSL you would have been presented with this error: –

This happens because, up until now, WSL did not support systemd. However, Microsoft recently announced systemd support for WSL here: –

https://devblogs.microsoft.com/commandline/systemd-support-is-now-available-in-wsl/

This is pretty cool and gives us another option for running SQL Server locally on Linux (great for testing and getting to grips with the Linux platform).

So how can we get this up and running?

Before going any further, the minimum OS version required to get this running is 10.0.22000.0 (i.e. Windows 11, build 22000 or later).

I tried getting this to work on Windows 10, but no joy, I'm afraid.

UPDATE – 2022-11-23 – Microsoft have now made this available on Windows 10 (but I have not tested it, I'm afraid). The announcement is here: –

https://devblogs.microsoft.com/commandline/the-windows-subsystem-for-linux-in-the-microsoft-store-is-now-generally-available-on-windows-10-and-11/

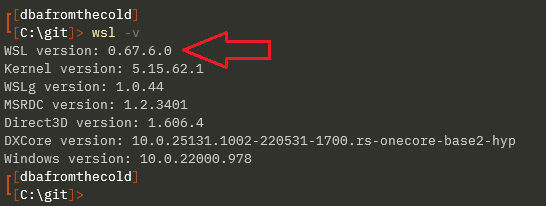

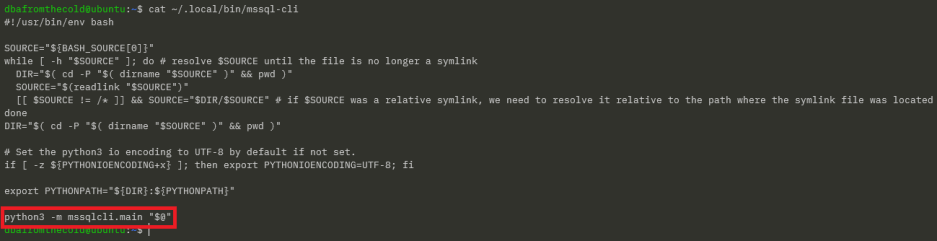

The first thing to do is get WSL up to a version that supports systemd (0.67.6 or later). It's currently only available through the Store to Windows Insiders, but you can download the installer from here: –

https://github.com/microsoft/WSL/releases

Run the installer once it has downloaded and then confirm the version of WSL with `wsl --version`: –
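The systemd announcement gives WSL 0.67.6 as the minimum version, and `wsl --version` reports the installed one on a `WSL version:` line. If you'd rather not compare dotted version numbers by eye, here's a minimal sketch of a comparison helper. `version_at_least` is a hypothetical name, and it assumes you have a POSIX shell with awk to hand (for example Git Bash, or an existing WSL distro):

```shell
# Hypothetical helper: succeed (exit 0) if dotted version $1 >= dotted version $2.
# Fields are compared numerically, left to right; missing fields count as 0.
version_at_least() {
  awk -v have="$1" -v want="$2" 'BEGIN {
    n = split(have, h, ".")
    m = split(want, w, ".")
    len = (n > m) ? n : m
    for (i = 1; i <= len; i++) {
      a = h[i] + 0  # unset fields are empty, so they become 0
      b = w[i] + 0
      if (a > b) exit 0
      if (a < b) exit 1
    }
    exit 0  # all fields equal
  }'
}
```

For example, `version_at_least 0.70.4.0 0.67.6 && echo "systemd supported"` prints `systemd supported`; pass whatever number your own `wsl --version` reports as the first argument.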

Now install a distro from the Microsoft Store to run SQL Server on: –

N.B. – I'm using Ubuntu 20.04.5 for this. I did try Ubuntu 22.04 but couldn't get it to work.

Once it's installed, log into WSL and update and upgrade the packages: –

```shell
sudo apt update
sudo apt upgrade
```
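As this was only tested on Ubuntu 20.04, it's worth confirming which release your distro actually reports. A minimal sketch, assuming the standard /etc/os-release file (which is written as shell-style variable assignments); `is_ubuntu_2004` is a hypothetical helper name:

```shell
# Hypothetical helper: succeed if the os-release file ($1, default
# /etc/os-release) describes Ubuntu 20.04 (any 20.04.x point release).
is_ubuntu_2004() {
  local file="${1:-/etc/os-release}"
  [ -r "$file" ] || return 1
  # Source the file in a subshell so ID/VERSION_ID don't leak into the caller.
  (
    . "$file"
    [ "$ID" = "ubuntu" ] && [ "$VERSION_ID" = "20.04" ]
  )
}
```

For example: `is_ubuntu_2004 && echo "Ubuntu 20.04" || echo "not Ubuntu 20.04"`. If you pass a different file, give it as a path containing a slash (e.g. `./os-release`), since `.` searches `PATH` for bare names in some shells.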

Cool, OK, now we're going to enable systemd in WSL. Create a /etc/wsl.conf file (/etc is owned by root, so you'll need sudo) and add the following: –

```ini
[boot]
systemd=true
```
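A small mistake in this file is easy to miss, so before restarting WSL you might want to double-check it. Below is a minimal sketch of a checker (`systemd_enabled_in` is a hypothetical name). It assumes the simple layout of a `[boot]` section header on its own line with `systemd=true` on its own line inside that section, and it doesn't attempt to parse every possible wsl.conf variation:

```shell
# Hypothetical helper: succeed if the given wsl.conf ($1, default /etc/wsl.conf)
# contains "systemd=true" inside a [boot] section.
systemd_enabled_in() {
  local conf="${1:-/etc/wsl.conf}"
  [ -r "$conf" ] || return 1
  awk '
    # A line starting with "[" is a section header; note whether it is [boot].
    /^[ \t]*\[/ { in_boot = ($0 ~ /^[ \t]*\[boot\][ \t\r]*$/); next }
    # Inside [boot], look for systemd=true (whitespace around "=" allowed).
    in_boot && /^[ \t]*systemd[ \t]*=[ \t]*true[ \t\r]*$/ { found = 1 }
    END { exit (found ? 0 : 1) }
  ' "$conf"
}
```

Run `systemd_enabled_in && echo "systemd enabled"` inside WSL. Note that a single line reading `[boot] systemd=true` is deliberately treated as not enabled, since the setting isn't on its own line.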

Exit out of WSL and then run the following from PowerShell or a Command Prompt: –

```
wsl --shutdown
```

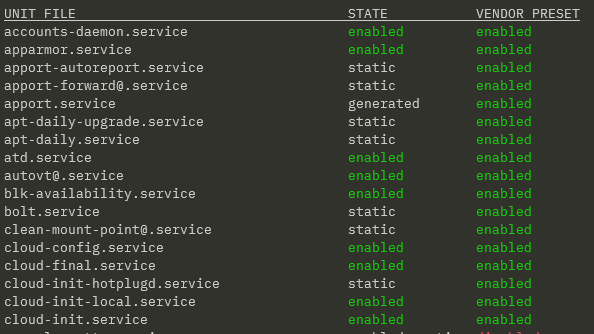

Jump straight back into WSL and run the following to confirm systemd is running: –

```shell
systemctl list-unit-files --type=service
```
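Another quick check is to look at which process is running as PID 1: with systemd enabled it should be `systemd`, whereas without it you'll typically see WSL's own `init`. A minimal sketch, using hypothetical helper names:

```shell
# Hypothetical helper: print the short name of the process running as PID 1.
pid1_name() {
  # /proc/1/comm holds the command name of PID 1.
  cat /proc/1/comm 2>/dev/null
}

# Hypothetical helper: succeed if PID 1 is systemd.
systemd_is_pid1() {
  [ "$(pid1_name)" = "systemd" ]
}
```

For example: `systemd_is_pid1 && echo "systemd is running" || echo "PID 1 is $(pid1_name)"`.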

Great stuff, now we can run through the usual SQL Server install process (detailed here).

Import the GPG keys: –

```shell
wget -qO- https://packages.microsoft.com/keys/microsoft.asc | sudo apt-key add -
```

Register the repository: –

```shell
sudo add-apt-repository "$(wget -qO- https://packages.microsoft.com/config/ubuntu/20.04/mssql-server-preview.list)"
```

Update and install SQL Server: –

```shell
sudo apt-get update
sudo apt-get install -y mssql-server
```

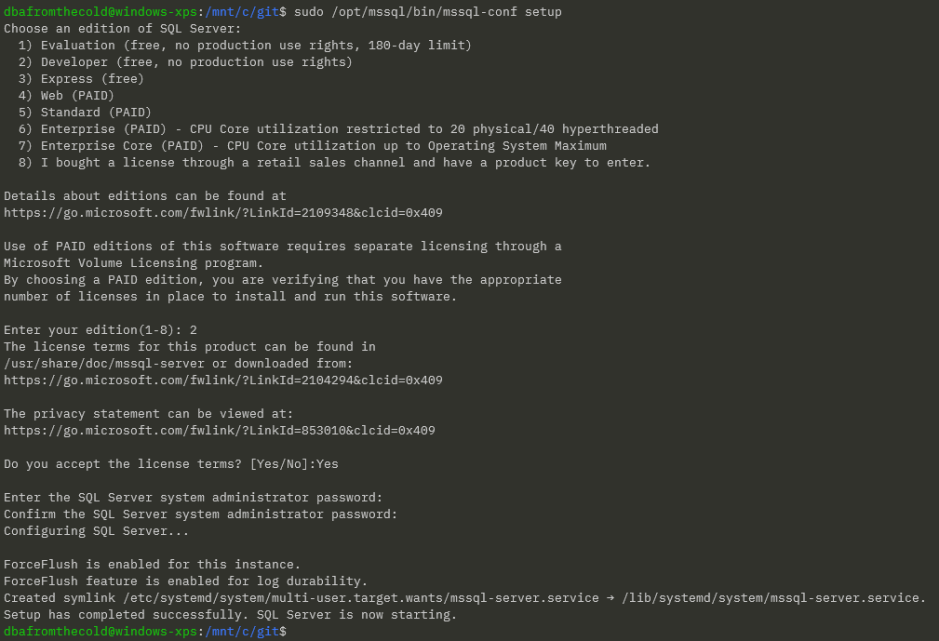

Once that’s complete, you’ll be able to configure SQL Server with mssql-conf: –

```shell
sudo /opt/mssql/bin/mssql-conf setup
```

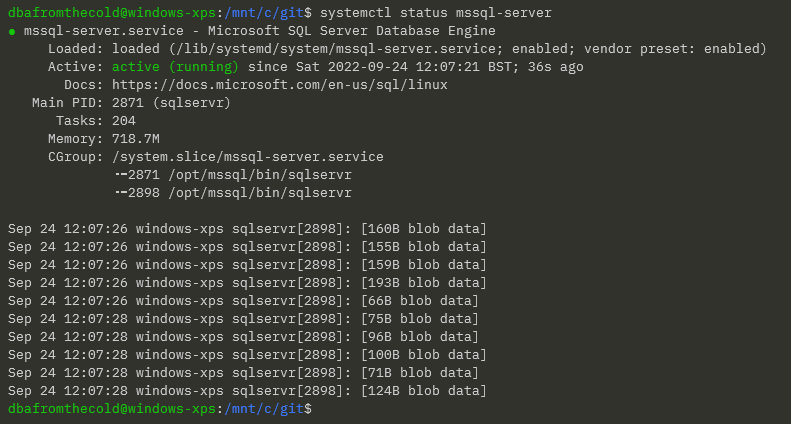

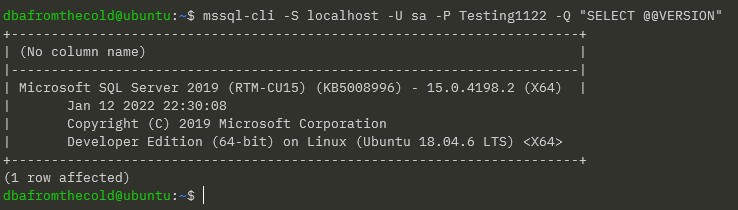
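During setup, mssql-conf asks for an SA password, and SQL Server's default password policy requires at least 8 characters drawn from at least three of four sets: uppercase letters, lowercase letters, digits, and symbols. If you want to sanity-check a candidate password first, here's a rough sketch. `sa_password_ok` is a hypothetical name, and it only approximates the policy (it treats any non-alphanumeric character as a symbol):

```shell
# Hypothetical helper: approximate SQL Server's default password policy.
# Succeeds if $1 is 8+ characters long and uses at least three of:
# uppercase letters, lowercase letters, digits, symbols.
sa_password_ok() {
  local pw="$1" classes=0
  [ "${#pw}" -ge 8 ] || return 1
  case $pw in *[[:upper:]]*) classes=$((classes + 1)) ;; esac
  case $pw in *[[:lower:]]*) classes=$((classes + 1)) ;; esac
  case $pw in *[[:digit:]]*) classes=$((classes + 1)) ;; esac
  case $pw in *[![:alnum:]]*) classes=$((classes + 1)) ;; esac
  [ "$classes" -ge 3 ]
}
```

For example, `sa_password_ok 'Str0ngPass!' && echo ok` prints `ok`, while `sa_password_ok password` fails because it only uses lowercase letters.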

Confirm SQL Server is running: –

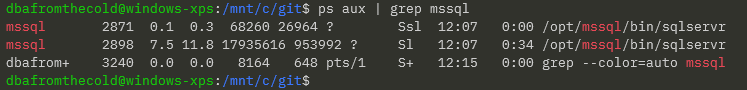
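If you'd like a check you can script, `systemctl is-active` prints just the unit's state (e.g. `active`). A minimal sketch, assuming the service is named `mssql-server` as installed by the package; `mssql_state` is a hypothetical helper:

```shell
# Hypothetical helper: print the state of the mssql-server systemd unit
# (e.g. "active" or "inactive"), or "unknown" if it can't be determined.
mssql_state() {
  local state=""
  if command -v systemctl >/dev/null 2>&1; then
    # is-active exits non-zero unless the unit is active, so ignore its status.
    state=$(systemctl is-active mssql-server 2>/dev/null) || true
  fi
  echo "${state:-unknown}"
}
```

For example: `[ "$(mssql_state)" = active ] && echo "SQL Server is up"`.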

We can also confirm which SQL Server processes are running with: –

```shell
ps aux | grep mssql
```

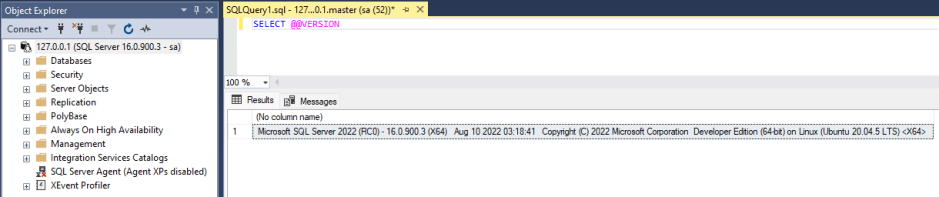
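Before opening SSMS, a quick way to see whether SQL Server is listening, from inside the distro, is to try opening a TCP connection to its default port, 1433. A minimal sketch, assuming bash (it relies on bash's /dev/tcp redirection, which plain sh doesn't provide) and the default port; `port_open` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if host $1 (default 127.0.0.1) accepts TCP
# connections on port $2 (default 1433, SQL Server's default port).
# Relies on bash's /dev/tcp redirection, so run it under bash, not sh.
port_open() {
  local host="${1:-127.0.0.1}" port="${2:-1433}"
  # Open (and immediately close) fd 3 in a subshell; errors are discarded.
  (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null
}
```

For example: `port_open && echo "something is listening on 1433"`.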

Finally, connect to SQL Server in SSMS (using 127.0.0.1, not localhost): –

And there we have it, SQL Server running in a Windows Subsystem for Linux distro!

Thanks for reading!