Back to some core SQL this week and one of my favourite features, extended events.

Introduced in SQL Server 2008 they are a big improvement on SQL profiler, in the amount of information that can be tracked (more) and the impact that they have on the system (less).

If you are still using profiler, please stop now! 🙂

One of the sessions that I have constantly running on SQL Server instances that I monitor is one to capture information on failed queries. You need to know what queries are failing on your production instances and why.

So let’s go through setting the session up, here’s the script to setup the extended event (remember to change the file paths for the filename and meta-data file!): –

CREATE EVENT SESSION [FailedQueries] ON SERVER

ADD EVENT sqlserver.error_reported

(ACTION(sqlserver.client_app_name, sqlserver.client_hostname,

sqlserver.database_name, sqlserver.sql_text, sqlserver.username)

WHERE ([package0].[greater_than_int64]([severity], (10))))

ADD TARGET package0.event_file (SET

filename = N'C:\SQLServer\XEvents\FailedQueries.xel'

,metadatafile = N'C:\SQLServer\XEvents\FailedQueries.xem'

,max_file_size = (5)

,max_rollover_files = (10))

WITH (STARTUP_STATE = ON)

GO

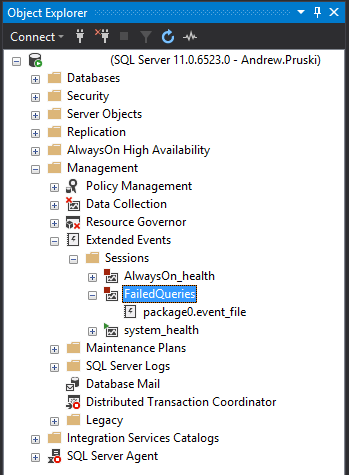

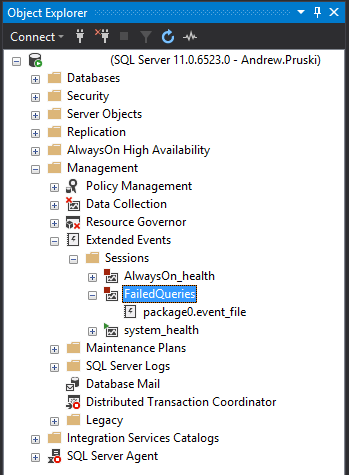

The new extended event can be viewed under Object Explorer > Management > Extended Events in SSMS: –

What this is going to do is create an extended event that will automatically startup when the SQL instance starts and capture all errors recorded that have a severity level greater than 10.

Full documentation on severity levels can be found here but levels 1 through 10 are really just information and you don’t need to worry about them.

I’ve also added in some extra information in the ACTION section (for bits and bobs that aren’t automatically included) and have set the maximum number of files that can be generated to 10, each with a max size of 5MB.

I’ve left pretty much everything else out for clarity but more details on the options that are available when creating sessions can be found here.

Let’s start the session up: –

ALTER EVENT SESSION [FailedQueries] ON SERVER

STATE = START;

GO

Now you can watch the queries be recorded live by right clicking in SSMS and selecting “Watch Live Data”…

…but I want to show you how to parse the events recorded in the file. Let’s test the event by running: –

SELECT 1/0;

Which will obviously fail!

So now we can parse the event, this requires a little XQuery but it’s not too bad (also, remember to change the filepath!): –

SELECT

[XML Data],

[XML Data].value('(/event[@name=''error_reported'']/@timestamp)[1]','DATETIME') AS [Timestamp],

[XML Data].value('(/event/action[@name=''database_name'']/value)[1]','varchar(max)') AS [Database],

[XML Data].value('(/event/data[@name=''message'']/value)[1]','varchar(max)') AS [Message],

[XML Data].value('(/event/action[@name=''sql_text'']/value)[1]','varchar(max)') AS [Statement]

FROM

(SELECT

OBJECT_NAME AS [Event],

CONVERT(XML, event_data) AS [XML Data]

FROM

sys.fn_xe_file_target_read_file

('C:\SQLServer\XEvents\FailedQueries*.xel',NULL,NULL,NULL)) as FailedQueries;

GO

So there we have the XML generated by the extended event and we’ve parsed it into readable columns!

Now with larger files XQuery can be heavy intense on resources so what I tend to do is copy the event’s files to my local machine before parsing; so just be wary when running.

As I said earlier I always have this event running on instances of SQL that I monitor. There really shouldn’t be any impact in doing so as (hopefully) there aren’t that many queries that fail in the instance. As ever though, test this thoroughly before going anywhere near production with it.

Thanks for reading!