One of the best features introduced in SQL Server 2016 was the Query Store. Having the ability to see which queries had regressed (gotten bad plans) over a period of time was pretty much a game changer imho. But many of us are still labouring away on earlier versions of SQL Server so we don’t get to work with this great new feature.

Until now, with the release of OpenQueryStore

If you haven’t heard of OpenQueryStore, it’s an open source project designed to, you guessed it, bring Query Store functionality to earlier versions of SQL Server (2008 to 2014).

When I heard about this project I immediately went onto the GitHub site, downloaded the repository and read through the installation instructions. I’ve been playing around with it for a while and this week was given a preview of the v2.0 release (which has now been released).

v2.0 provides two different install options for OpenQueryStore, Classic or Centralized.

Classic behaves in a similar fashion to Query Store in 2016 by monitoring an individual database whereas Centralized is installed in one “management” database and you specify which databases in the SQL instance you wish to monitor.

I went for Centralized mode and installation is a cinch, just download the repo and then run the Install.ps1 script: –

.\Install.ps1 -SqlInstance "SQL2012" -Database "DBA" -OQSMode "Centralized" -SchedulerType "SQL Agent"

After the script has run, the installer gives advice on what to do next: –

So I needed to INSERT a row into oqs.[monitored_databases] for each database I wanted to monitor, setup a schedule for the SQL Agent job (I set the job to run every minute), then run the UPDATE against oqs.[collection_metadata] and OpenQueyStore will begin collecting data.

A word of caution here. I used the SQL Agent data collection method. There is another option to run using Service Broker but if you do this the install script will run: –

ALTER DATABASE [<your db>] SET ENABLE_BROKER;

This requires an exclusive lock on the database so watch out!

After a while you’ll be able to run the reports that come with the download to get an overview of your system. This is the main dashboard: –

OpenQueryStore Main Dashboard

Here’s the Wait Stats report: –

OpenQueryStore Wait Statistics

These reports are great for a quick overview of the database monitored. Nicely laid out and with a quick glance I can see if there are any immediate causes for concern.

However, the real benefit of OpenQueryStore is in the data collected. The installation creates the following objects within the database: –

- [oqs].[activity_log]

- [oqs].[collection_metadata]

- [oqs].[intervals]

- [oqs].[monitored_databases]

- [oqs].[plan_dbid]

- [oqs].[plans]

- [oqs].[queries]

- [oqs].[query_runtime_stats]

- [oqs].[wait_stats]

So there’s an absolute wealth of data to dig through! The reports provide query IDs so I can drop one into say: –

DECLARE @queryID SMALLINT;

SELECT TOP 1

rs.[query_id]

,rs.[interval_id]

,rs.[last_execution_time]

,rs.[execution_count]

,rs.[avg_rows]

,rs.[last_logical_reads]

,rs.[avg_logical_reads]

,rs.[last_logical_writes]

,rs.[avg_logical_writes]

,q.[query_statement_text]

,p.[plan_handle]

FROM [oqs].[query_runtime_stats] rs

INNER JOIN [oqs].[Queries] q ON rs.[query_id] = q.[query_id]

INNER JOIN [oqs].[Plans] p ON q.[plan_id] = p.[plan_id]

WHERE rs.[query_id] = @queryID

ORDER BY rs.[interval_id] DESC;

GO

And then view all the execution stats of this query. Pretty cool, eh?

If you’re working with SQL Server versions 2008 to 2014 I’d highly recommend that you install this on a development box and start investigating the data that it’s collecting. As with any new system, set it up and monitor to see what it’s doing but I haven’t seen anything untoward.

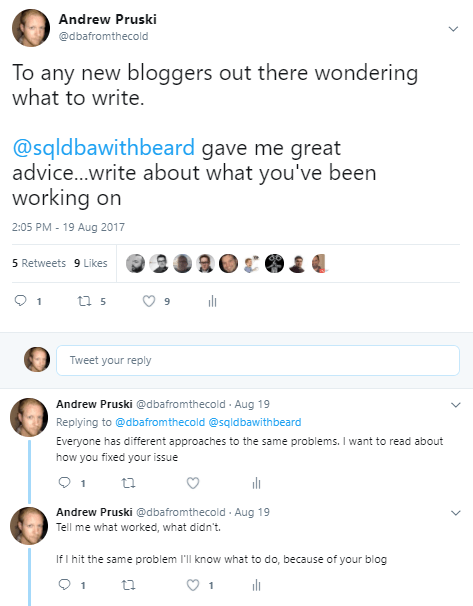

The project is in (very) active development so keep an eye on the twitter account for more updates.

Thanks for reading!