NOVEMBER 2017 UPDATE – Microsoft has now released a new version of Azure Container Service (AKS) specifically for Kubernetes. I’ve blogged about it here.

I’ve been meaning to get to grips with Kubernetes for a while now, but I have to admit I was completely overwhelmed when I first approached the subject. There’s so much information and documentation out there that it’s difficult to know where to start.

What really helped was James Anderson’s excellent post on Orchestrating SQL Server with Kubernetes.

After working through the instructions in James’ post and playing with Minikube for a while, I wanted to try setting up and configuring a larger Kubernetes cluster.

Back in July, Microsoft announced Azure Container Service (ACS), so I started learning how to spin up SQL Server containers in a Kubernetes cluster in that environment.

This post will detail how to build a three-node Kubernetes cluster in ACS and run a SQL Server container on it.

To follow the steps in this post, you will need Bash on Windows installed and an Azure account.

The first thing to do is install azure-cli: –

    echo "deb [arch=amd64] https://packages.microsoft.com/repos/azure-cli/ wheezy main" | \
        sudo tee /etc/apt/sources.list.d/azure-cli.list
    sudo apt-key adv --keyserver packages.microsoft.com --recv-keys 417A0893
    sudo apt-get install apt-transport-https
    sudo apt-get update && sudo apt-get install azure-cli

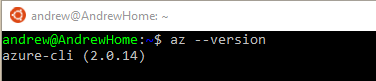

Confirm the install: –

az --version

Now log in to Azure by running the following: –

az login

Bit of an odd login process, imho. The command prints a link and a code: copy the link into a web browser and enter the code when prompted. You’ll then be taken to the Azure login page; enter your details, then close the page when prompted.

I don’t really understand why a username and password aren’t enough, but anyway, once logged in we can start building a cluster.

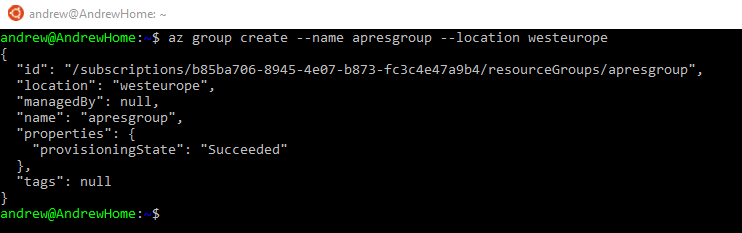

First thing to create is a resource group to hold all the objects in the cluster: –

az group create --name apresgroup --location westeurope

Now the cluster can be created.

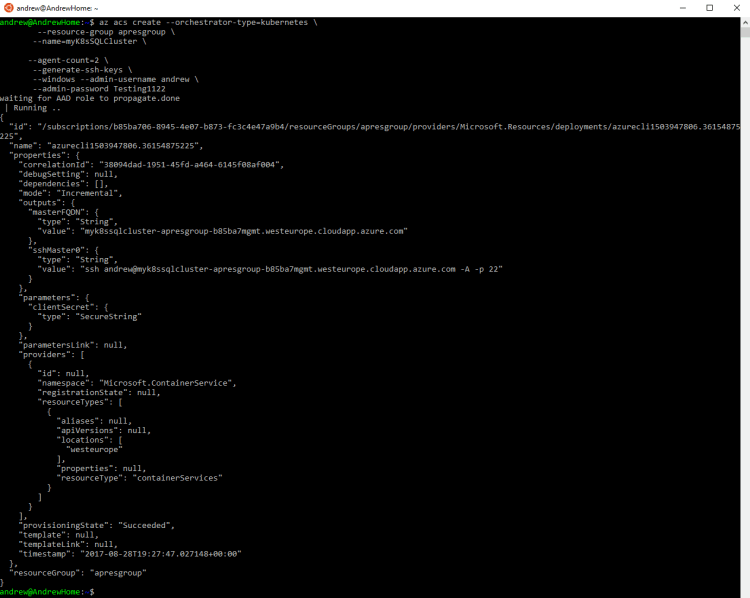

The following command will create a three-node Kubernetes cluster, with one Linux master node and two Windows agent nodes (which is where we will run our SQL Server container).

To set up the cluster, run the following (this will take some time, as it’s creating VMs and all the other objects required for the cluster): –

    az acs create --orchestrator-type=kubernetes \
        --resource-group apresgroup \
        --name=myK8sSQLCluster \
        --agent-count=2 \
        --generate-ssh-keys \
        --windows --admin-username andrew \
        --admin-password Testing1122
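Typing the admin password straight into the command leaves it in your shell history. As a minimal sketch (the function name `create_k8s_cluster` and the `ACS_ADMIN_PASSWORD` environment variable are my own hypothetical choices, not part of the Azure CLI), the same `az acs create` call can be wrapped so that it reads the password from the environment instead. The flags are exactly the ones used above.

```bash
#!/usr/bin/env bash
# Sketch: the az acs create call from the post, parameterised.
# create_k8s_cluster and ACS_ADMIN_PASSWORD are hypothetical names, not Azure CLI features.
create_k8s_cluster() {
  local resource_group="${1:-apresgroup}"
  local cluster_name="${2:-myK8sSQLCluster}"
  local admin_user="${3:-andrew}"

  # Refuse to run rather than pass an empty password for the Windows nodes.
  if [ -z "${ACS_ADMIN_PASSWORD:-}" ]; then
    echo "Set ACS_ADMIN_PASSWORD before calling create_k8s_cluster" >&2
    return 1
  fi

  # One Linux master plus two Windows agents, same flags as the command in the post.
  az acs create --orchestrator-type=kubernetes \
    --resource-group "$resource_group" \
    --name="$cluster_name" \
    --agent-count=2 \
    --generate-ssh-keys \
    --windows --admin-username "$admin_user" \
    --admin-password "$ACS_ADMIN_PASSWORD"
}
```

For example, `read -rs ACS_ADMIN_PASSWORD && export ACS_ADMIN_PASSWORD` lets you type the password without it being echoed, after which `create_k8s_cluster apresgroup myK8sSQLCluster andrew` runs the same command as above.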

Great stuff, we have a three-node cluster up and running in ACS! The next thing to do is install kubectl, the command-line interface for managing Kubernetes clusters.

To install kubectl run: –

az acs kubernetes install-cli

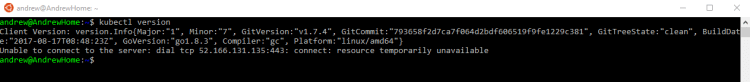

Verify the install (don’t worry about the error; kubectl can’t reach a cluster yet because we haven’t connected it to one): –

kubectl version
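If you only want to confirm the binaries are installed, `kubectl version --client` prints just the client version without trying to contact a cluster. The small check below goes one step further: `require_tools` is a hypothetical helper of my own that reports any of the named commands that aren’t on your PATH.

```bash
#!/usr/bin/env bash
# Hypothetical helper: report every command in the argument list that can't be found.
# Returns 0 only if all of them are available.
require_tools() {
  local missing=0 tool
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `require_tools az kubectl && kubectl version --client` checks both CLIs from this post and then shows the kubectl client version.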

Cool, so far so good. Next thing to do is connect kubectl to the cluster.

To allow kubectl to connect to the cluster, run: –

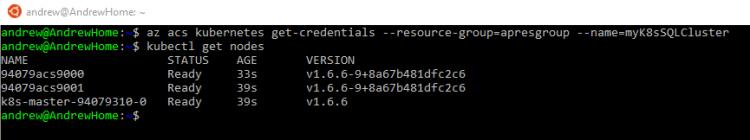

az acs kubernetes get-credentials --resource-group=apresgroup --name=myK8sSQLCluster

Ok, we’re connected. Let’s have a look at the cluster nodes.

To view the nodes in the cluster, run: –

kubectl get nodes
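If you’d like to script this check rather than eyeball the output, something like the sketch below counts all the nodes and the ones labelled as Windows, which is the label the pod’s nodeSelector relies on later. `check_cluster_nodes` is a hypothetical name, and the expected counts of 3 and 2 simply mirror the cluster created above. On newer Kubernetes versions the OS label is `kubernetes.io/os` rather than `beta.kubernetes.io/os`.

```bash
#!/usr/bin/env bash
# Hypothetical helper: confirm the cluster has the expected number of nodes and
# the expected number carrying the Windows OS label used by the pod's nodeSelector.
check_cluster_nodes() {
  local expected_total="${1:-3}"     # 1 Linux master + 2 Windows agents
  local expected_windows="${2:-2}"
  local total windows

  # --no-headers drops the NAME/STATUS header row so wc -l counts nodes only.
  total="$(kubectl get nodes --no-headers | wc -l | tr -d ' ')"
  windows="$(kubectl get nodes -l beta.kubernetes.io/os=windows --no-headers | wc -l | tr -d ' ')"

  echo "nodes: $total (windows: $windows)"
  [ "$total" -eq "$expected_total" ] && [ "$windows" -eq "$expected_windows" ]
}
```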

Excellent, we have our three-node cluster: our master and two agents. Now we can build our container running SQL Server.

To do this, we will create a YAML file locally that contains the configuration of our container.

So create a YAML file that will spin up the container in a Kubernetes pod: –

nano sqlserver.yaml

And enter the following into the file: –

    apiVersion: v1
    kind: Pod
    metadata:
      name: sqlserver
      labels:
        name: sqlserver
    spec:
      containers:
      - name: sqlserver1
        image: microsoft/mssql-server-windows:latest
        ports:
        - containerPort: 1433
        env:
        - name: SA_PASSWORD
          value: "Testing1122"
        - name: ACCEPT_EULA
          value: "Y"
      nodeSelector:
        beta.kubernetes.io/os: windows

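This will create one pod with a single container running SQL Server 2017. The nodeSelector makes Kubernetes schedule the pod onto one of the Windows agent nodes, which is required because this is a Windows container image. We can use the file to spin up the pod by running: –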

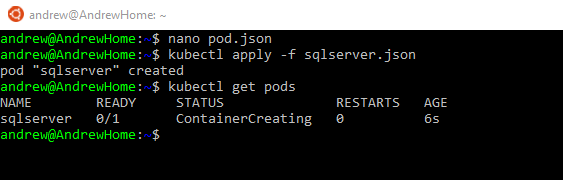

kubectl apply -f sqlserver.yaml

The pod will be created quite quickly, but it takes a bit of time for the container within it to be spun up (9 minutes in my setup). This is probably because the SQL Server image has to be pulled down from Docker Hub, and Windows container images are large.

To check the status of the pod/container run: –

kubectl get pods
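Since the image pull can take several minutes, a small polling loop saves re-running `kubectl get pods` by hand. This is a sketch rather than anything official: `wait_for_pod_running` is a hypothetical helper, the timeout and interval defaults are arbitrary, and it reads the pod’s `status.phase` through kubectl’s JSONPath output.

```bash
#!/usr/bin/env bash
# Hypothetical helper: poll a pod's phase until it reports Running or a timeout expires.
# Assumes kubectl is already connected to the cluster via az acs kubernetes get-credentials.
wait_for_pod_running() {
  local pod="${1:-sqlserver}"   # pod name from sqlserver.yaml
  local timeout="${2:-900}"     # total seconds to wait; image pulls can be slow
  local interval="${3:-15}"     # seconds between checks
  local waited=0 phase

  while [ "$waited" -lt "$timeout" ]; do
    phase="$(kubectl get pod "$pod" -o jsonpath='{.status.phase}' 2>/dev/null)"
    echo "pod/$pod phase: ${phase:-unknown} (${waited}s elapsed)"
    if [ "$phase" = "Running" ]; then
      return 0
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done

  echo "timed out waiting for pod/$pod" >&2
  return 1
}
```

Run it as `wait_for_pod_running sqlserver`. Newer kubectl releases (1.11 onwards) also include `kubectl wait --for=condition=Ready pod/sqlserver --timeout=15m`, which does much the same job.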

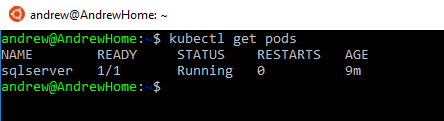

Once the container has been spun up, the pod will have a status of Running: –

Great stuff! All looks good! We have a pod up and running. But is SQL Server?

The following command shows the pod’s logs, so we can check that SQL Server is running within it: –

kubectl logs sqlserver
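To check this from a script, you can search the log output for SQL Server’s readiness message. This assumes the container’s log includes SQL Server’s usual “SQL Server is now ready for client connections” message; `sql_ready_in_logs` is a hypothetical helper, and if your log output looks different, adjust the pattern to match.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the pod's log contains SQL Server's readiness message.
# ASSUMPTION: the log includes the standard "ready for client connections" line;
# change the pattern if your output differs.
sql_ready_in_logs() {
  local pod="${1:-sqlserver}"
  kubectl logs "$pod" | grep -q "SQL Server is now ready for client connections"
}
```

For example, `sql_ready_in_logs sqlserver && echo "SQL Server is up"`.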

Looks pretty good to me! SQL Server is up and has accepted the config value in our YAML file to change the SA password. But how are we going to connect to it?

What we need to do now is define a Kubernetes service. A service is a level of abstraction over pods: it selects pods by label and gives them a stable endpoint, allowing connections to the containers within those pods regardless of where in the cluster they are running. So let’s set up a service.

To do this, create a YAML file locally to define the service: –

nano service.yaml

Enter the following into the YAML file: –

    kind: Service
    apiVersion: v1
    metadata:
      name: sqlserver-service
    spec:
      ports:
      - name: sqlserver
        port: 1433
        targetPort: 1433
      selector:
        name: sqlserver
      type: LoadBalancer

Note the type of service we’re creating. A type of LoadBalancer does exactly what you’d expect: it creates an Azure load balancer to allow external connections into the pods mapped to the service (here, any pod with the label name: sqlserver).

Also note that with all these objects I’m not trying to do anything fancy with the ports they’re listening on. I’m setting all of them to the SQL Server default of 1433.

OK, run the following to create the service: –

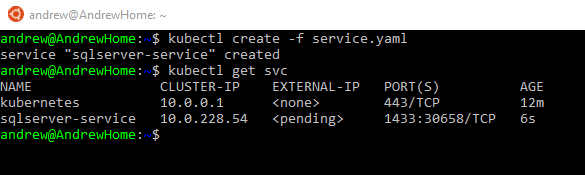

kubectl create -f service.yaml

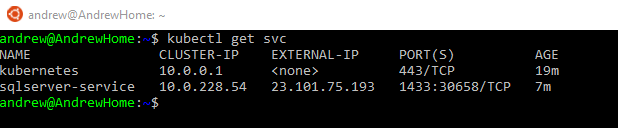

Now we’re waiting for the service to be assigned an external IP that we can use to connect to SQL Server.

To check the status of the service: –

kubectl get svc

After a period of time (7 minutes in my setup) the service will be assigned an external IP address: –

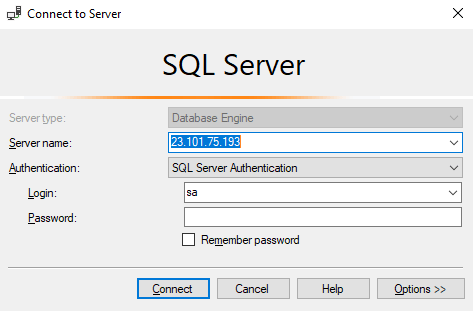
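Rather than re-running `kubectl get svc` until the EXTERNAL-IP column changes from `<pending>`, you can poll for the address. In this sketch `wait_for_external_ip` is a hypothetical helper: it reads `status.loadBalancer.ingress[0].ip` from the service via kubectl’s JSONPath output and prints the IP once Azure has assigned one.

```bash
#!/usr/bin/env bash
# Hypothetical helper: wait for the LoadBalancer service to get an external IP, then print it.
# Progress messages go to stderr so stdout carries only the IP address.
wait_for_external_ip() {
  local svc="${1:-sqlserver-service}"   # service name from service.yaml
  local timeout="${2:-900}"             # total seconds to wait
  local interval="${3:-15}"             # seconds between checks
  local waited=0 ip

  while [ "$waited" -lt "$timeout" ]; do
    ip="$(kubectl get svc "$svc" -o jsonpath='{.status.loadBalancer.ingress[0].ip}' 2>/dev/null)"
    if [ -n "$ip" ]; then
      echo "$ip"
      return 0
    fi
    echo "waiting for external IP on service/$svc (${waited}s elapsed)" >&2
    sleep "$interval"
    waited=$((waited + interval))
  done

  echo "timed out waiting for an external IP on service/$svc" >&2
  return 1
}
```

For example, `SQL_IP="$(wait_for_external_ip sqlserver-service)"` captures the address for connecting from SSMS or another client.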

Excellent stuff! We can now drop that external IP into SQL Server Management Studio (SSMS) to connect to SQL Server: –

Enter the sa username and the password that we defined in the YAML file used to create the pod and boom!

We’re connected 🙂
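If you’d rather stay in Bash than switch to SSMS, `sqlcmd` can make the same connection. This sketch assumes `sqlcmd` from Microsoft’s mssql-tools package is installed and on your PATH, and that the `SA_PASSWORD` shell variable holds the password from sqlserver.yaml; `query_sql_version` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: look up the service's external IP and run a test query with sqlcmd.
# ASSUMPTIONS: sqlcmd (mssql-tools) is installed; SA_PASSWORD holds the sa password.
query_sql_version() {
  local ip
  ip="$(kubectl get svc sqlserver-service -o jsonpath='{.status.loadBalancer.ingress[0].ip}')"
  if [ -z "$ip" ]; then
    echo "service has no external IP yet" >&2
    return 1
  fi
  # -S server,port  -U login  -P password  -Q run the query, then exit
  sqlcmd -S "$ip,1433" -U sa -P "$SA_PASSWORD" -Q "SELECT @@VERSION"
}
```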

So that’s how to set up SQL Server running in a container on a Kubernetes cluster in Azure Container Service. Phew!

This is a really simple setup; there’s so much more to explore with Kubernetes and ACS, but I hope it gives you a good grounding to be able to play around with this yourself.

Hopefully in the next few weeks I’ll be able to write a couple more posts about exploring what this technology stack has to offer.

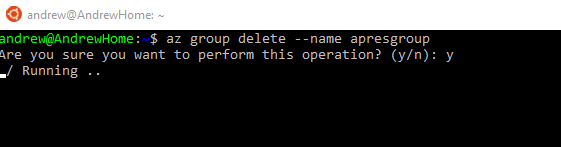

By the way, if you don’t want to leave everything running after following these steps, there’s a simple command that deletes the resource group and all the objects created in it: –

az group delete --name apresgroup
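`az group delete` asks for confirmation before it deletes anything. For an unattended clean-up, a sketch like the one below passes `--yes` to skip the prompt and then uses `az group exists` to confirm the group has gone; `cleanup_resource_group` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: delete a resource group (and everything in it) without the
# interactive prompt, then check that Azure no longer lists it.
cleanup_resource_group() {
  local rg="${1:-apresgroup}"
  # --yes skips the "Are you sure?" prompt; deleting the VMs, disks and load balancer is irreversible.
  az group delete --name "$rg" --yes || return 1
  # az group exists prints "true" or "false".
  if [ "$(az group exists --name "$rg")" = "false" ]; then
    echo "resource group $rg deleted"
  else
    echo "resource group $rg still exists" >&2
    return 1
  fi
}
```

Adding `--no-wait` to the delete makes it return immediately instead of blocking until the deletion finishes, but an existence check straight afterwards would then most likely still report the group as present.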

Thanks for reading!