Continuing my series on working with Docker on Windows, I noticed that I always open a remote PowerShell window when working with Docker on servers. There’s nothing wrong with this, and if you want to know how to do that you can follow my instructions here.

However, what if we want to connect to the Docker engine remotely? There’s got to be a way to do that, right?

Well, it’s not quite so straightforward, but there is a way to do it involving a custom image downloaded from the Docker Hub (built by Stefan Scherer [g|t]) which creates TLS certs to allow remote connections.

EDIT – I should point out that this is a method of administering a remote Docker engine securely. You can expose a Docker TCP endpoint and connect without using TLS certificates, but an unsecured endpoint lets anyone who can reach it control the engine, so I’m not going to show you how to do that 🙂

Anyway, let’s go through the steps.

Open up an admin PowerShell session on your server and navigate to the root of the C: drive.

First we’ll create a folder to download the necessary certificates to: –

cd C:\
mkdir docker

Now we’re going to follow some of the steps outlined by Stefan Scherer here

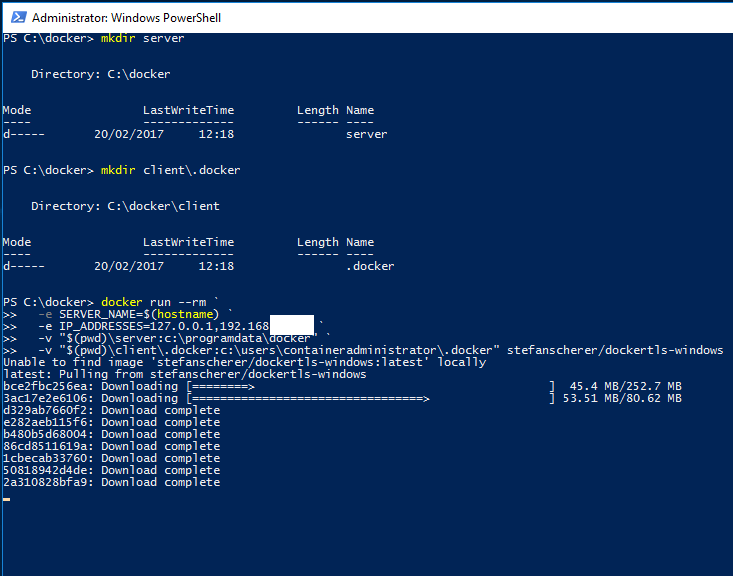

So first, we need to create a couple more directories: –

cd C:\docker
mkdir server\certs.d
mkdir server\config
mkdir client\.docker

And now we’re going to download an image from Stefan’s Docker Hub to create the required TLS certificates on our server and drop them in the folders we just created (replace the second IP address with the IP address of your server): –

docker run --rm `
  -e SERVER_NAME=$(hostname) `
  -e IP_ADDRESSES=127.0.0.1,192.168.XX.XX `
  -v "$(pwd)\server:c:\programdata\docker" `
  -v "$(pwd)\client\.docker:c:\users\containeradministrator\.docker" stefanscherer/dockertls-windows
dir server\certs.d
dir server\config
dir client\.docker

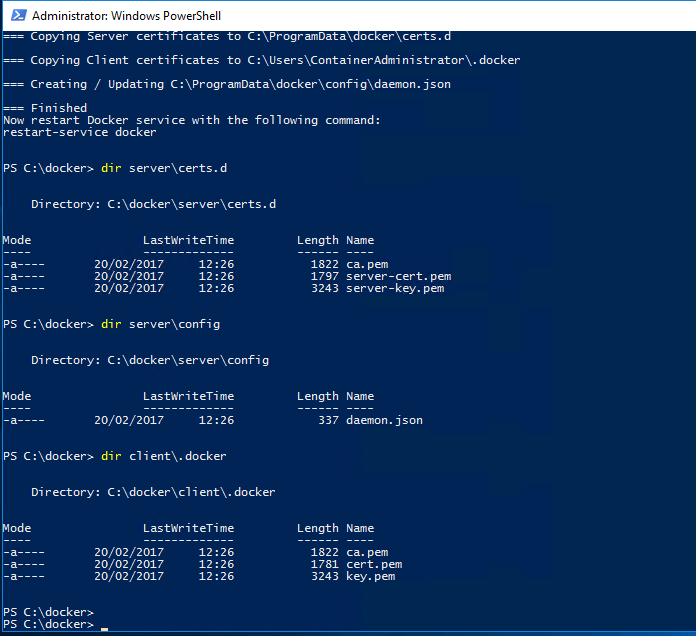

Once complete you’ll see: –

Now we need to copy the created certs (and the daemon.json file) to the following locations: –

mkdir C:\ProgramData\docker\certs.d
copy-item C:\docker\server\certs.d\ca.pem C:\ProgramData\docker\certs.d
copy-item C:\docker\server\certs.d\server-cert.pem C:\ProgramData\docker\certs.d
copy-item C:\docker\server\certs.d\server-key.pem C:\ProgramData\docker\certs.d
copy-item C:\docker\server\config\daemon.json C:\ProgramData\docker\config

Also open up the daemon.json file and make sure it looks like this: –

{
  "hosts": [
    "tcp://0.0.0.0:2375",
    "npipe://"
  ],
  "tlscert": "C:\\ProgramData\\docker\\certs.d\\server-cert.pem",
  "tlskey": "C:\\ProgramData\\docker\\certs.d\\server-key.pem",
  "tlscacert": "C:\\ProgramData\\docker\\certs.d\\ca.pem",
  "tlsverify": true
}
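Before restarting the engine, it can save a round trip to check that the file parses as JSON and that the certificate paths in it actually exist. Here is a minimal sketch of such a check; `Test-DockerDaemonConfig` is a hypothetical helper name of my own, not something that ships with Docker, and the default path is the location used in the copy step above.

```powershell
# Hypothetical helper: checks that daemon.json parses and that the TLS files it references exist.
function Test-DockerDaemonConfig {
    param(
        [string]$Path = 'C:\ProgramData\docker\config\daemon.json'
    )

    # ConvertFrom-Json throws if the file content is not valid JSON
    $config = Get-Content -Path $Path -Raw | ConvertFrom-Json

    $problems = @()
    foreach ($key in 'tlscacert', 'tlscert', 'tlskey') {
        $file = $config.$key
        if (-not $file) {
            $problems += "$key is not set"
        }
        elseif (-not (Test-Path -Path $file)) {
            $problems += "$key points to a missing file: $file"
        }
    }
    if ($config.tlsverify -ne $true) {
        $problems += 'tlsverify is not true'
    }

    # No output means nothing obviously wrong was found
    return $problems
}
```

Running `Test-DockerDaemonConfig` with no arguments on the server checks the default location. It only looks at the file and the paths, so it won’t catch a certificate that exists but is invalid.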

Now restart the docker engine: –

restart-service docker

N.B. – If you get an error, have a look in the application event log. The error messages generated are pretty good in letting you know what’s gone wrong (for a freaking change…amiright??)
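If you’d rather not click through Event Viewer, something like the following should pull the recent entries from the same session. This is a sketch that assumes Windows PowerShell (`Get-EventLog` isn’t available in PowerShell 7) and that the engine logs under the source name `Docker`; the five-minute window is an arbitrary choice.

```powershell
# Show Docker entries written to the Application log in the last five minutes, oldest first
Get-EventLog -LogName Application -Source Docker -After (Get-Date).AddMinutes(-5) |
    Sort-Object TimeGenerated |
    Format-List TimeGenerated, EntryType, Message
```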

Next we need to copy the Docker certs to our local machine so that we can reference them when trying to connect to the Docker engine remotely.

So copy all the certs from C:\ProgramData\docker\certs.d to the .docker folder in your user profile on your local machine (mine is C:\Users\Andrew.Pruski\.docker).

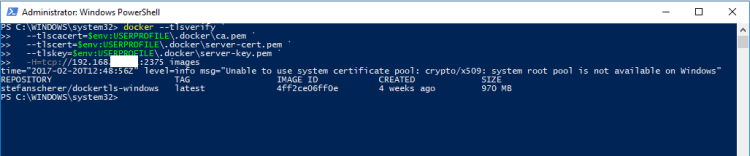
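Since I already use remote PowerShell sessions, one way to do the copy is over a session rather than a file share. A rough sketch, assuming remoting to the server already works and using the same placeholder IP address as earlier; run it from your local machine.

```powershell
# Assumption: PowerShell remoting to the server is already set up.
# '192.168.XX.XX' is the placeholder for the server's IP address.
$session = New-PSSession -ComputerName '192.168.XX.XX' -Credential (Get-Credential)

# Make sure the local .docker folder exists
$target = Join-Path $env:USERPROFILE '.docker'
New-Item -ItemType Directory -Path $target -Force | Out-Null

# Pull each certificate file from the server into the local folder
foreach ($name in 'ca.pem', 'server-cert.pem', 'server-key.pem') {
    Copy-Item -FromSession $session -Path "C:\ProgramData\docker\certs.d\$name" -Destination $target
}

Remove-PSSession $session
```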

We can then connect remotely via: –

docker --tlsverify `
  --tlscacert=$env:USERPROFILE\.docker\ca.pem `
  --tlscert=$env:USERPROFILE\.docker\server-cert.pem `
  --tlskey=$env:USERPROFILE\.docker\server-key.pem `
  -H=tcp://192.168.XX.XX:2375 version
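Typing those flags for every command gets old quickly, so here’s a sketch of a small helper that builds them once. `Get-RemoteDockerArgs` is a hypothetical name of my own, and it assumes the certificate file names and port used in this post.

```powershell
# Hypothetical helper: builds the TLS arguments used in the command above
function Get-RemoteDockerArgs {
    param(
        [Parameter(Mandatory)][string]$Server,
        [string]$CertDir = (Join-Path $env:USERPROFILE '.docker'),
        [int]$Port = 2375
    )

    return @(
        '--tlsverify'
        "--tlscacert=$(Join-Path $CertDir 'ca.pem')"
        "--tlscert=$(Join-Path $CertDir 'server-cert.pem')"
        "--tlskey=$(Join-Path $CertDir 'server-key.pem')"
        "-H=tcp://${Server}:$Port"
    )
}
```

To use it, store the result with `$tlsArgs = Get-RemoteDockerArgs -Server '192.168.XX.XX'` and then splat it into any docker command, for example `docker @tlsArgs version` or `docker @tlsArgs ps -a`.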

Remember that you’ll need to open port 2375 on the server’s firewall, and you’ll need the Docker client on your local machine (if not already installed). Also, Microsoft’s article advises that the following warning is benign: –

level=info msg="Unable to use system certificate pool: crypto/x509: system root pool is not available on Windows"

Whatever that means. Maybe I’ll just stick to the remote powershell sessions 🙂

Thanks for reading!