UPDATE – JANUARY 2026 – This feature has now been moved to stable in Kubernetes v1.35…full details are here: –

https://kubernetes.io/docs/tasks/configure-pod-container/resize-container-resources/

Whilst this is great unfortunately I did a bit more digging with regards to SQL Server (which OK, I should have done initially) and it seems that SQL Server will not see the new limits without a restart. So we can increase the limits in the pod without a restart but SQL will not see them.

My recommendation is to set the resizePolicy to RestartContainer in the manifest: –

resizePolicy: - resourceName: cpu restartPolicy: RestartContainer - resourceName: memory restartPolicy: RestartContainer

Then when increasing the memory via: –

kubectl patch pod mssql-statefulset-0 --subresource resize --patch `

'{\"spec\":{\"containers\":[{\"name\":\"mssql-container\", \"resources\":{\"requests\":{\"memory\":\"4096Mi\"}, \"limits\":{\"memory\":\"4096Mi\"}}}]}}'

This will restart the container within the pod, not the whole pod which is quicker but does mean a slight outage.

ORIGINAL ARTICLE

One of the new features in Kubernetes v1.33 is the ability to resize CPU and memory resources for containers online, aka without having to recreate the pod the container is running in. In the past, when adjusting a pod’s resources, Kubernetes would delete the existing pod and create a new one via a controller.

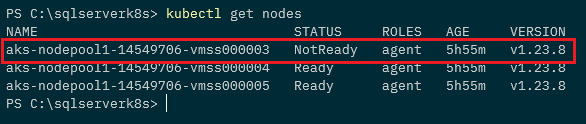

Not a problem for applications that can have multiple replicas running, but for SQL Server this would cause a disruption as we (generally) only have one pod running SQL Server in a statefulset. Let’s see this in action.

First we’ll deploy this simple statefulset to Kubernetes: –

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: mssql-statefulset

spec:

serviceName: "mssql"

replicas: 1

podManagementPolicy: Parallel

selector:

matchLabels:

name: mssql-pod

template:

metadata:

labels:

name: mssql-pod

spec:

securityContext:

fsGroup: 10001

containers:

- name: mssql-container

image: mcr.microsoft.com/mssql/server:2022-CU16-ubuntu-20.04

ports:

- containerPort: 1433

name: mssql-port

env:

- name: ACCEPT_EULA

value: "Y"

- name: MSSQL_SA_PASSWORD

value: "Testing1122"

resources:

requests:

memory: "2048Mi"

cpu: "2000m"

limits:

memory: "2048Mi"

cpu: "2000m"

The important part here is the CPU and memory settings: –

resources:

requests:

memory: "2048Mi"

cpu: "2000m"

limits:

memory: "2048Mi"

cpu: "2000m"

N.B. – you may have noticed that the limits and requests here are the same value. This is to set a “Guaranteed” Quality of Service for the pod…it’s a recommended best practice for SQL Server in Kubernetes, more info is here: –

https://learn.microsoft.com/en-us/sql/linux/sql-server-linux-kubernetes-best-practices-statefulsets

Let’s apply that manifest: –

kubectl apply -f ./sqlserver.yaml

In the past we had to edit our statefulset to increase these values: –

kubectl edit sts mssql-statefulset

This would recreate the pod with the new limits/requests: –

But now as of Kubernetes v1.33 we can scale pods without a restart! See here for the more info: –

https://kubernetes.io/docs/tasks/configure-pod-container/resize-container-resources/

One thing to note…the code to do this in the official docs will error out if you’re running kubectl on Windows (sigh). In order for this to run successfully on Windows a backslash has to be added before any double quote character.

So in order to increase the memory of the pod running, we would run: –

kubectl patch pod mssql-statefulset-0 --subresource resize --patch `

'{\"spec\":{\"containers\":[{\"name\":\"mssql-container\", \"resources\":{\"requests\":{\"memory\":\"4000Mi\"}, \"limits\":{\"memory\":\"4000Mi\"}}}]}}'

And then if we check out the pod’s yaml: –

kubectl get pod mssql-statefulset-0 -o yaml

And there we are, cool!

So to do this for CPU, we would run: –

kubectl patch pod mssql-statefulset-0 --subresource resize --patch `

'{\"spec\":{\"containers\":[{\"name\":\"mssql-container\", \"resources\":{\"requests\":{\"cpu\":\"4000m\"}, \"limits\":{\"cpu\":\"4000m\"}}}]}}'

Ok, I appreciate this code isn’t exactly the easiest to type out! Thankfully we can now add a –subresource resize flag to an edit command: –

kubectl edit pod mssql-statefulset-0 --subresource resize

And this will allow us to update the CPU and memory limits/requests of the pod without a restart!

Thanks for reading!