With all the changes that have happened with VMware since the Broadcom acquisition I have been asked more and more about alternatives for running SQL Server.

One of the options that has repeatedly cropped up is KubeVirt.

KubeVirt provides the ability to run virtual machines in Kubernetes…so it could essentially provide an option to “lift and shift” VMs from VMware to a Kubernetes cluster.

A bit of background on KubeVirt…it’s a CNCF project accepted in 2019 and moved to “incubating” maturity level in 2022…so it’s been around a while now. KubeVirt uses custom resources and controllers in order to create, deploy, and manage VMs in Kubernetes by using libvirt and QEMU under the hood to provision those virtual machines.

I have to admit, I’m skeptical about this…we already have a way to deploy SQL Server to Kubernetes, and I don’t really see the benefits of deploying an entire VM.

But let’s run through how to get up and running with SQL Server in KubeVirt. There are a bunch of pre-requisites required here so I’ll detail the setup that I’m using.

I went with a physical server for this, as I didn’t want to deal with any nested virtualisation issues (VMs within VMs) and I could only get ONE box…so I’m running a “compressed” Kubernetes cluster, aka the node is both a control plane and worker node. I also needed a storage provider, and as I work for Pure Storage…I have access to a FlashArray which I’ll provision persistent volumes from via Portworx (the PX-CSI offering to be exact). Portworx provides a CSI driver that exposes FlashArray storage to Kubernetes for PersistentVolume provisioning.

So it’s not an ideal setup…I’ll admit…but should be good enough to get up and running to see what KubeVirt is all about.

Let’s go ahead and get started with KubeVirt.

First thing to do is actually deploy KubeVirt to the cluster…I followed the guide here: –

https://kubevirt.io/user-guide/cluster_admin/installation/

export RELEASE=$(curl https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt) # set the latest KubeVirt release

kubectl apply -f https://github.com/kubevirt/kubevirt/releases/download/${RELEASE}/kubevirt-operator.yaml # deploy the KubeVirt operator

kubectl apply -f https://github.com/kubevirt/kubevirt/releases/download/${RELEASE}/kubevirt-cr.yaml # create the KubeVirt CR (instance deployment request) which triggers the actual installation

Let’s wait until all the components are up and running: –

kubectl -n kubevirt wait kv kubevirt --for condition=Available
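As an extra check, the KubeVirt custom resource also reports a deployment phase. Here’s a minimal sketch, assuming the CR is named kubevirt in the kubevirt namespace (which is what kubevirt-cr.yaml creates); the function name is only for illustration:

```shell
# Print the deployment phase of the KubeVirt CR.
# It should read "Deployed" once the installation has finished.
kubevirt_phase() {
  kubectl get kubevirt.kubevirt.io/kubevirt -n kubevirt -o jsonpath='{.status.phase}'
}
```

Once that reports Deployed, running kubectl get pods -n kubevirt should list the virt-api, virt-controller, virt-handler, and virt-operator pods.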

Here’s what each of these components does: –

virt-api - The API endpoint used by Kubernetes and virtctl to interact with VM and VMI subresources.

virt-controller - Control-plane component that reconciles VM and VMI objects, creates VMIs, and manages migrations.

virt-handler - Node-level component responsible for running and supervising VMIs and QEMU processes on each node.

virt-operator - Manages the installation, upgrades, and lifecycle of all KubeVirt core components.

There are two pods each for the controller and the operator, as they are deployments with a default replicas value of 2…I’m running on a one node cluster so could scale those down, but I’ll leave the defaults for now.

More information on the architecture of KubeVirt can be found here: –

https://kubevirt.io/user-guide/architecture/

And I found this blog post really useful!

https://arthurchiao.art/blog/kubevirt-create-vm/

The next tool we’ll need is the Containerized Data Importer (CDI). This is the backend component that will allow us to upload ISO files to the Kubernetes cluster, which will then be mounted as persistent volumes when we deploy a VM. The guide I followed was here: –

https://github.com/kubevirt/containerized-data-importer

export VERSION=$(curl -s https://api.github.com/repos/kubevirt/containerized-data-importer/releases/latest | grep '"tag_name":' | sed -E 's/.*"([^"]+)".*/\1/')

kubectl create -f https://github.com/kubevirt/containerized-data-importer/releases/download/$VERSION/cdi-operator.yaml

kubectl create -f https://github.com/kubevirt/containerized-data-importer/releases/download/$VERSION/cdi-cr.yaml

And again let’s wait for all the components to be up and running: –

kubectl get all -n cdi
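Rather than re-running that command until everything is ready, kubectl can block until the deployments are available. This is a rough sketch, assuming the CDI components run as deployments in the cdi namespace; the function name and the 300s default timeout are my own picks:

```shell
# Block until every deployment in the cdi namespace reports Available,
# or give up after the timeout ($1, defaults to 300s).
wait_for_cdi() {
  kubectl -n cdi wait deployment --all --for=condition=Available --timeout="${1:-300s}"
}
```

Calling wait_for_cdi 60s would wait for up to a minute before returning an error.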

Right, the NEXT tool we’ll need is virtctl. This is the CLI that allows us to deploy/configure/manage VMs in KubeVirt: –

export VERSION=$(curl https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt)

wget https://github.com/kubevirt/kubevirt/releases/download/${VERSION}/virtctl-${VERSION}-linux-amd64
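The downloaded file isn’t executable and isn’t called virtctl yet. Here’s one way to sort that out, as a sketch only: the function name and the /usr/local/bin default are assumptions, so pick whatever directory is on your own PATH:

```shell
# Copy a downloaded virtctl binary into a directory on the PATH
# and mark it executable in one step.
# $1 = path to the downloaded file
# $2 = target directory (defaults to /usr/local/bin, an assumption)
install_virtctl() {
  install -m 0755 "$1" "${2:-/usr/local/bin}/virtctl"
}

# Example (may need sudo when writing to /usr/local/bin):
#   install_virtctl "virtctl-${VERSION}-linux-amd64"
```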

And confirm that it’s installed (make the downloaded file executable, rename it to virtctl, and add it to your $PATH environment variable first): –

virtctl version

Okey dokey, now we need to upload our ISO files for Windows and SQL Server to the cluster.

Note I’m referencing the storage class from my storage provider (PX-CSI) here. Also, I could not get this to work from my desktop; I had to copy the ISO files to the Kubernetes node and run the uploads from there. The value for the --uploadproxy-url flag is the IP address of the cdi-uploadproxy service: –

Uploading the Windows ISO (I went with Windows Server 2025): –

virtctl image-upload pvc win2025-pvc --size 10Gi \
  --image-path=./en-us_windows_server_2025_updated_oct_2025_x64_dvd_6c0c5aa8.iso \
  --uploadproxy-url=https://10.97.56.82:443 \
  --storage-class px-fa-direct-access \
  --insecure

And uploading the SQL Server 2025 install ISO: –

virtctl image-upload pvc sql2025-pvc --size 10Gi \
  --image-path=./SQLServer2025-x64-ENU.iso \
  --uploadproxy-url=https://10.97.56.82:443 \
  --storage-class px-fa-direct-access \
  --insecure
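The upload proxy address in those commands is specific to my cluster. It can be looked up rather than hard-coded, something like the sketch below. This assumes the service is called cdi-uploadproxy in the cdi namespace and listens on port 443 (as in the commands above); the function name is just illustrative:

```shell
# Build the --uploadproxy-url value from the ClusterIP of the cdi-uploadproxy service.
uploadproxy_url() {
  ip=$(kubectl get service cdi-uploadproxy -n cdi -o jsonpath='{.spec.clusterIP}') || return 1
  printf 'https://%s:443\n' "$ip"
}
```

The output can then be passed straight to virtctl with --uploadproxy-url="$(uploadproxy_url)". As it’s a ClusterIP, it’s only reachable from inside the cluster network, which would fit with the upload only working from the node.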

Let’s confirm the resulting persistent volumes: –

kubectl get persistentvolumes

Ok, so the next step is to pull down a container image so that it can be referenced in the VM yaml. This image contains the VirtIO drivers needed for Windows to detect the VM’s virtual disks and network interfaces: –

sudo ctr images pull docker.io/kubevirt/virtio-container-disk:latest

sudo ctr images ls | grep virtio

The final thing to do is create the PVCs/PVs that will be used for the OS, SQL data files, and SQL log files within the VM. The yaml is: –

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: winos
spec:
  accessModes: [ "ReadWriteOnce" ]
  resources:
    requests:
      storage: 100Gi
  storageClassName: px-fa-direct-access
  volumeMode: Block
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: sqldata
spec:
  accessModes: [ "ReadWriteOnce" ]
  resources:
    requests:
      storage: 50Gi
  storageClassName: px-fa-direct-access
  volumeMode: Block
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: sqllog
spec:
  accessModes: [ "ReadWriteOnce" ]
  resources:
    requests:
      storage: 25Gi
  storageClassName: px-fa-direct-access
  volumeMode: Block

And then create!

kubectl apply -f pvc.yaml
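Before moving on, it’s worth checking that the three claims have bound. Here’s a sketch using kubectl wait with a JSONPath condition, which needs a reasonably recent kubectl (1.23 or later, as far as I know). The function name and 120s timeout are arbitrary, and if the storage class uses WaitForFirstConsumer binding the claims will sit in Pending until the VM starts, so this would time out:

```shell
# Wait for the OS, data, and log PVCs to reach the Bound phase.
wait_for_pvcs() {
  kubectl wait --for=jsonpath='{.status.phase}'=Bound \
    pvc/winos pvc/sqldata pvc/sqllog --timeout=120s
}
```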

Right, now we can create the VM! Below is the yaml I used to create the VM: –

apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: win2025
spec:
  runStrategy: Manual # VM will not start automatically
  template:
    metadata:
      labels:
        app: sqlserver
    spec:
      domain:
        firmware:
          bootloader:
            efi: # uefi boot
              secureBoot: false # disable secure boot
        resources: # requesting same limits and requests for guaranteed QoS
          requests:
            memory: "8Gi"
            cpu: "4"
          limits:
            memory: "8Gi"
            cpu: "4"
        devices:
          disks:
            # Disk 1: OS
            - name: osdisk
              disk:
                bus: scsi
            # Disk 2: SQL Data
            - name: sqldata
              disk:
                bus: scsi
            # Disk 3: SQL Log
            - name: sqllog
              disk:
                bus: scsi
            # Windows installer ISO
            - name: cdrom-win2025
              cdrom:
                bus: sata
                readonly: true
            # VirtIO drivers ISO
            - name: virtio-drivers
              cdrom:
                bus: sata
                readonly: true
            # SQL Server installer ISO
            - name: sql2025-iso
              cdrom:
                bus: sata
                readonly: true
          interfaces:
            - name: default
              model: virtio
              bridge: {}
              ports:
                - port: 3389 # port for RDP
                - port: 1433 # port for SQL Server
      networks:
        - name: default
          pod: {}
      volumes:
        - name: osdisk
          persistentVolumeClaim:
            claimName: winos
        - name: sqldata
          persistentVolumeClaim:
            claimName: sqldata
        - name: sqllog
          persistentVolumeClaim:
            claimName: sqllog
        - name: cdrom-win2025
          persistentVolumeClaim:
            claimName: win2025-pvc
        - name: virtio-drivers
          containerDisk:
            image: kubevirt/virtio-container-disk
        - name: sql2025-iso
          persistentVolumeClaim:
            claimName: sql2025-pvc

Let’s deploy the VM: –

kubectl apply -f win2025.yaml

And let’s confirm: –

kubectl get vm

So now we’re ready to start the VM and install Windows: –

virtctl start win2025

This will start an instance of the VM we created…to monitor the startup: –

kubectl get vm

kubectl get vmi

kubectl get pods

So we have a virtual machine, an instance of that virtual machine, and a virt-launcher pod…which is actually running the virtual machine by launching the QEMU process for the virtual machine instance.
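Instead of polling those three commands, kubectl can wait for the instance to report as ready. Here’s a small sketch, assuming the VMI takes the same name as the VM (win2025 here); the function name and 300s default timeout are my own:

```shell
# Wait for a VirtualMachineInstance to report the Ready condition.
# $1 = VMI name, $2 = timeout (defaults to 300s)
wait_for_vmi() {
  kubectl wait vmi "$1" --for=condition=Ready --timeout="${2:-300s}"
}
```

So wait_for_vmi win2025 should return once the virt-launcher pod has the VM up, at which point it’s safe to try connecting.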

Once the VM instance has been started, we can connect to it via VNC and run through the Windows installation process. I’m using TigerVNC here.

virtctl vnc win2025 --vnc-path "C:\Tools\vncviewer64-1.15.0.exe" --vnc-type=tiger

Hit any key to boot from the ISO (you’ll need to go into the boot options) but we’re now running through a normal Windows install process!

When the option to select the drive to install Windows appears, we have to load the drivers from the ISO we mounted from the virtio-container-disk:latest container image: –

Once those are loaded, we’ll be able to see all the disks attached to the VM and continue the install process.

When the install completes, we’ll need to check the drivers in Device Manager: –

Go through and install any missing drivers (check disks and anything under “other devices”).

OK because VNC drives me nuts…once we have Windows installed, we’ll open up remote connections within Windows and then deploy a node port service to the cluster to open up port 3389…which will let us RDP to the VM: –

apiVersion: v1
kind: Service
metadata:
  name: win2025-rdp
spec:
  ports:
    - port: 3389
      protocol: TCP
      targetPort: 3389
  selector:
    vm.kubevirt.io/name: win2025
  type: NodePort

Confirm service and port: –

kubectl get services
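To pull out just the port that Kubernetes allocated on the node, something like the following works for any single-port NodePort service in the current namespace. It’s a sketch; the function name is made up for illustration:

```shell
# Print the node port allocated to the first port of a NodePort service.
# $1 = service name
node_port() {
  kubectl get service "$1" -o jsonpath='{.spec.ports[0].nodePort}'
}
```

Running node_port win2025-rdp gives the port to pair with the node’s IP address in the RDP client.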

Once we can RDP, we can continue to configure Windows (if we want to) but the main thing now is to get SQL Server 2025 installed. Don’t forget to online and format the disks for the SQL Server data and log files!

The ISO file containing the SQL install media is mounted within the VM…so it’s just a normal install. Run through the install and confirm it’s successful: –

Once the installation is complete…let’s deploy another node port service to allow us to connect to SQL in the VM: –

apiVersion: v1
kind: Service
metadata:
  name: win2025-sql
spec:
  ports:
    - port: 1433
      protocol: TCP
      targetPort: 1433
  selector:
    vm.kubevirt.io/name: win2025
  type: NodePort

Confirm the service: –

kubectl get services

And let’s attempt to connect to SQL Server in SSMS: –

And there is SQL Server running in KubeVirt!

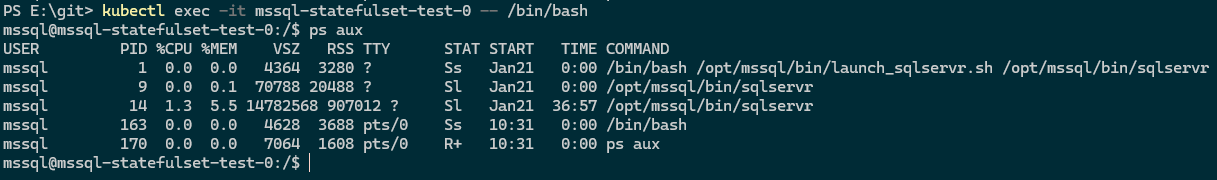

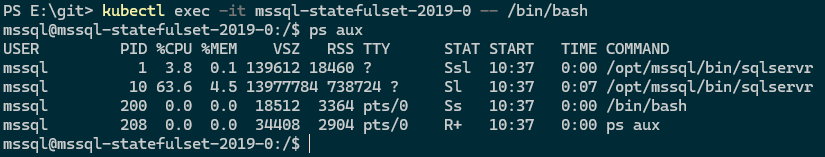

Ok, let’s run a performance test to see how it compares with SQL deployed to the same Kubernetes cluster as a statefulset. I used Anthony Nocentino’s containerised HammerDB tool for this…here are the results: –

# Statefulset result

TEST RESULT : System achieved 45319 NOPM from 105739 SQL Server TPM

# KubeVirt result

TEST RESULT : System achieved 5962 NOPM from 13929 SQL Server TPM

OK, well that’s disastrous! The KubeVirt instance achieved only 13% of the transactions per minute achieved by the SQL instance in the statefulset on the same cluster!
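For anyone wanting to check the maths, here’s a quick sketch of the calculation using the figures from the two test results above (the helper name is arbitrary):

```shell
# Express one benchmark figure as a percentage of another, to one decimal place.
pct_of() {
  awk -v part="$1" -v whole="$2" 'BEGIN { printf "%.1f\n", part / whole * 100 }'
}

pct_of 13929 105739   # KubeVirt TPM as a percentage of statefulset TPM
pct_of 5962 45319     # KubeVirt NOPM as a percentage of statefulset NOPM
```

Both come out at roughly 13%, so the gap is the same whichever metric you look at.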

I also noticed very high CPU privileged time when running the test against the database in the KubeVirt instance, which indicates that the VM is spending a lot of time in kernel or virtualization overhead. This is more than likely caused by incorrectly configured drivers, so it’s definitely not an optimal setup.

So OK, this might not be a perfectly fair test, but the gap is still significant. And it’s a lot of effort to go through just to get an instance of SQL Server up and running. But now that we do have a VM running SQL Server, I’ll explore how (or if) we can clone that VM so we don’t have to repeat this entire process for each new deployment…I’ll cover that in a later blog post. I’ll also see if I can address the performance issues.

But to round things off…deploying SQL as a statefulset to a Kubernetes cluster would still be my recommendation.

Thanks for reading!