One of the new features that’s coming with SQL Server 2016 is Dynamic Data Masking. DDM is designed to allow DBAs to limit exposure of sensitive data within a database with minimal (if any) changes required to existing queries.

How often have you seen a column in a database…in plain text…called password?? ARGH.

I really like the idea of being able to mask data without having to change any existing queries in the database. But how does it work? Well, SQL doesn’t do anything to the data itself but when a select statement retrieves data a “masking” function is applied to the columns (I won’t blame you if you just rolled your eyes and said “well…duh” when reading that last statement). These functions can be:-

Default: Changes column values to xxxx

MASKED WITH (FUNCTION = 'default()'))

Email: Changes email address to XXXX@XXXX.com

MASKED WITH (FUNCTION = 'email()')

Random: Replaces numeric data with range of values specified. Example shown will replace data with values from 1 to 100.

MASKED WITH (FUNCTION = 'random(1,100)')

Custom: A user defined type that can mask characters specified. Example shown masks all characters apart from the first and last with XXX.

MASKED WITH (FUNCTION = 'partial(1,"XXX",1)')

These functions can be applied when the table is created or added afterwards by running a ALTER TABLE statement. But enough of me explaining, let’s create a database for testing: –

USE [master];

GO

CREATE DATABASE [DDM_Demo];

GO

Now we can create a table, specifying the masking function inline: –

USE [DDM_Demo];

GO

CREATE TABLE dbo.Users

(UserID INT IDENTITY(1,1) PRIMARY KEY,

Forename VARCHAR(20),

Surname VARCHAR(20),

Username VARCHAR(20),

Email VARCHAR(50) MASKED WITH (FUNCTION = 'email()'),

AccountPassword VARCHAR(50) MASKED WITH (FUNCTION = 'default()'));

GO

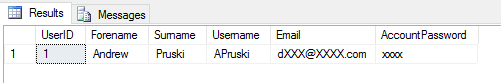

This will create a pretty simple table with the columns Email and AccountPassword masked with the email and default functions respectively. To see how they work insert some test data: –

INSERT INTO dbo.Users

(Forename,Surname,Username,Email,AccountPassword)

VALUES

('Andrew','Pruski','APruski','dbafromthecold@gmail.com','123456ABCDE');

GO

But I’m guessing your a sysadmin in your test instance (if you’re not, what’s going on there?) so in order to see the data masking in action, we need a new login & user: –

CREATE LOGIN [DDM_Login] WITH PASSWORD = 'testing', CHECK_POLICY=OFF,CHECK_EXPIRATION=OFF;

CREATE USER [DDM_User] FOR LOGIN [DDM_Login];

ALTER ROLE [db_datareader] ADD MEMBER [DDM_User];

GO

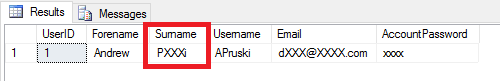

Now run a SELECT statement with under the new account’s credentials: –

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users ;

REVERT;

GO

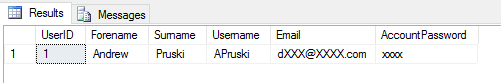

Cool! The data has been masked to the user. If we want this user to be able to see the data, we can run: –

GRANT UNMASK TO DDM_User;

GO

Now re-run the SELECT statement: –

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users;

REVERT;

GO

And now the user can see the data. To remove the access: –

REVOKE UNMASK TO [DDM_User];

GO

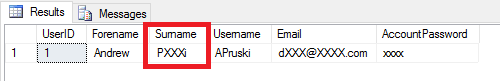

To add a new column with masking (this time using the custom function): –

ALTER TABLE dbo.Users

ALTER COLUMN Surname ADD MASKED WITH (FUNCTION = 'partial(1,"XXX",1)');

GO

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users ;

REVERT;

GO

The column has now been masked with a custom function. To remove the data masking function from the column, run: –

ALTER TABLE dbo.Users

ALTER COLUMN Surname DROP MASKED;

GO

N.B. – As far as I can tell, adding/dropping the data masking function is a meta-data only operation (if anyone knows otherwise, let me know!).

Obviously there are some limitations around columns that can have masking applied. Masking cannot be applied to: –

- Columns already encrypted

- Filestream

- Sparse columns

- Computed columns

There is a caveat with computed columns however, if a computed column depends on a masked column then the computed column WILL be masked. Also, masked columns cannot be part of a key for a full text index.

A final word of caution with this feature…THIS IS NOT ENCRYPTION! It is exactly what it says it is, a mask. This can be seen by having a look at the data on the page: –

DBCC IND('DDM_Demo','Users',1);

GO

DBCC TRACEON(3604);

GO

DBCC PAGE ('DDM_Demo',1,232,3);

GO

N.B. – For more info on DBCC IND & DBCC PAGE go here:- http://strictlysql.blogspot.ie/2010/08/dbcc-ind-dbcc-page-intro.html

The data can be seen in plain text on disk, the masking function only comes into play when reading the data. I’ve been playing around with this and, I can’t see any huge performance impact incurred when having to mask the data. SQL does report a extra operator when reading the data: –

GRANT SHOWPLAN TO DDM_User;

GO

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users ;

REVERT;

GO

That COMPUTE SCALAR operator isn’t there usually when running a bog standard select against a table but I’ve tried inserting 1,000,000 rows into the table, selecting from it, then dropping the masking, re-running the select and comparing the performance statistics but cannot see any (significant) impact. Here’s the code I used to test (have a go at running it yourself): –

--Switching off performance metrics for the inserts

SET STATISTICS TIME OFF;

SET STATISTICS IO OFF;

SET NOCOUNT ON;

GO

--Inserting a load of rows to the table

--I know this is horrible code!!!!!!!!!!!!!!!!!!!!!!!!!

DECLARE @Counter INT = 0;

WHILE @Counter <= 1000000

BEGIN

INSERT INTO dbo.Users

(Forename,Surname,Username,Email,AccountPassword)

VALUES

('TestForename' + CONVERT(SYSNAME,@Counter),

'TestSurname' + CONVERT(SYSNAME,@Counter),

'TestUsername' + CONVERT(SYSNAME,@Counter),

'testemail' + CONVERT(SYSNAME,@Counter) + '@gmail.com',

'TestPassword' + CONVERT(SYSNAME,@Counter));

SET @Counter = @Counter + 1

END

--Allowing the user to see the execution plan

GRANT SHOWPLAN TO DDM_User;

GO

--Cleaning out the data from the buffer pool

DBCC DROPCLEANBUFFERS;

GO

--Running the initial select

SET STATISTICS IO ON;

SET STATISTICS TIME ON;

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users ;

REVERT;

GO

--Dropping the masking

ALTER TABLE dbo.Users

ALTER COLUMN Email DROP MASKED

ALTER TABLE dbo.Users

ALTER COLUMN AccountPassword DROP MASKED;

GO

--Cleaning out the data from the buffer pool (again)

DBCC DROPCLEANBUFFERS;

GO

--Re-running the SELECT without the masking

SET STATISTICS IO ON;

SET STATISTICS TIME ON;

EXECUTE AS USER = 'DDM_User';

SELECT * FROM dbo.Users ;

REVERT;

GO

I have seen a minor increase in execution times but nothing that makes me worried. I am aware that this is a very simple test so any “gremlins” may not be apparent so as ever with a new feature, if you’re going to implement it, get it on a test system and hammer the thing!

Thanks for reading.